AI Songwriting Co-Pilots 2026: How to Use Suno, ChatGPT, and BandLab Beats Without Losing Your Voice

A practical 2026 playbook for using Suno, Udio, ChatGPT, Claude, BandLab Beats, ElevenLabs, and Lemonaide as writing partners — without surrendering authorship, copyright, or distributor eligibility.

Quick Answer

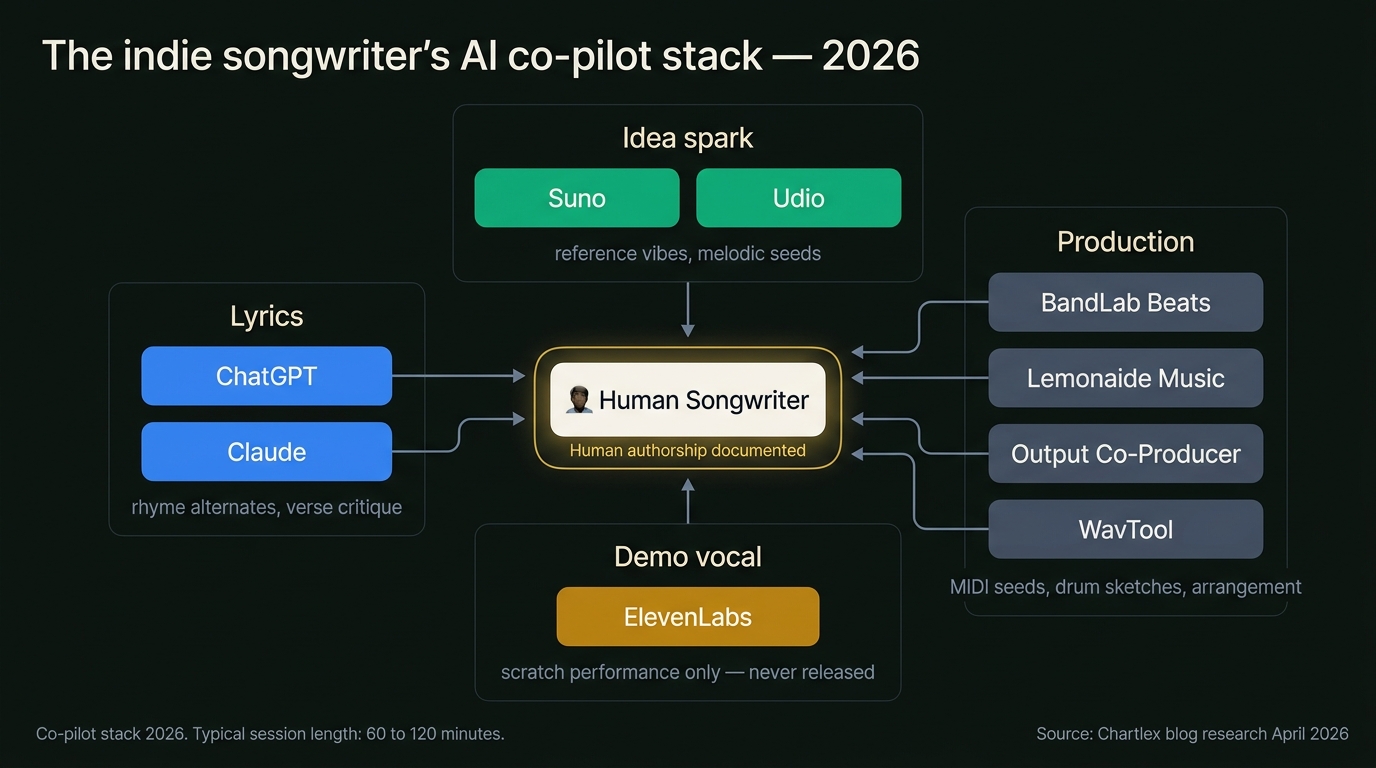

AI songwriting co-pilots in 2026 are most useful as idea generators and second-opinion editors, not as ghostwriters. The high-leverage workflow is to keep human authorship at the center: you write the seed (a hook, a concept, a chord progression, a melodic fragment), then route specific sub-tasks to specialised tools — Suno or Udio for melodic ideation and reference vibes, ChatGPT or Claude for lyric brainstorming, rhyme alternates, and structural critique, BandLab Beats and Lemonaide Music for sketch grooves and MIDI seeds, Output Co-Producer and WavTool for in-DAW arrangement help, and ElevenLabs for demo vocals when you do not have a singer on hand. The hard rules in 2026 are unchanged from the 2024 US Copyright Office guidance: purely AI-generated material is not copyrightable, but works with meaningful human authorship are protected for the human-authored portions only. Distributors (Spotify, Apple Music, DistroKid, TuneCore, CD Baby) now require AI-content disclosure, and Spotify began enforcing AI labelling at scale in 2026. According to Chartlex campaign data across 2,400+ independent artist campaigns, tracks released without clear human-authorship documentation are roughly 3x more likely to face takedowns or playlist removals when the AI provenance is later flagged. This article is the playbook for using these tools as a co-pilot without ending up in the takedown pile.

Last verified: 2026-04-28. Refresh cadence: quarterly, or whenever the US Copyright Office, Spotify, or a major distributor updates AI-content policy.

The Mental Model: Co-Pilot, Not Autopilot

There are two ways to use AI in songwriting in 2026, and only one of them is sustainable.

The first is autopilot: type a prompt into Suno, generate a finished track, slap a name on it, upload to a distributor. This works exactly until it does not. The track is uncopyrightable in the United States under current Copyright Office guidance. The distributor's terms of service likely require disclosure that you may not have made. The track will face higher scrutiny on Spotify's AI-labelling system. And artistically, the output is interchangeable with the millions of other Suno generations being uploaded daily — flat, recognisable, and quickly de-prioritised by playlist curators and the algorithm itself.

The second is co-pilot: you remain the songwriter. You make the structural decisions, you write the hook, you commit to a concept. AI fills in specific gaps where it is genuinely faster than you — generating fifteen rhyme alternatives in three seconds, sketching a drum groove you can replay live, surfacing a chord substitution you would not have thought of, or critiquing a verse you have stared at for too long. The human authorship is unambiguous, the copyright is intact, the distributor disclosure is honest and minimal, and the track sounds like you because you wrote it.

The rest of this article is the second mode. If you are looking for a comparison of the generative tools themselves and their output quality, the AI music generator comparison is the companion piece. This one is about the workflow.

For broader strategic context on which AI tools are worth the seat in your subscription stack, see the AI tools for indie musicians guide.

The 2026 Co-Pilot Stack, Tool by Tool

You do not need every tool in this section. A working stack for most indie songwriters in 2026 is one generative tool (Suno or Udio), one LLM (ChatGPT or Claude), one beat tool (BandLab Beats or Lemonaide), and one demo vocal tool (ElevenLabs) — four seats, roughly $50 to $90 per month combined. The rest are specialised additions for specific workflows.

Suno and Udio — Idea Generators, Not Final Tracks

Suno and Udio are the two dominant text-to-music platforms in 2026. Both produce full vocal tracks from a prompt in under a minute. The temptation is to use them as track-finishers. The professional use is as vibe references and melodic spark generators.

Practical co-pilot uses:

- Reference vibes for a brief. Generate three or four 60-second sketches that loosely match the mood you want, then describe what you keep and what you discard to a session musician or to yourself. The output is a moodboard, not a master.

- Melodic ideation. Prompt Suno for a chorus melody over a chord progression you have already written. Listen for one or two bars of melodic shape that surprise you. Transcribe those by ear into your DAW, then rewrite them in your own voice.

- Genre-blending experiments. Prompt for unusual genre combinations to find chord progressions and rhythmic feels you would not otherwise reach for. Use as inspiration, not output.

Hard rules:

- Do not use Suno or Udio output as the final master, full stop. The copyright status is unsettled, the distributor disclosure burden is real, and the sonic fingerprint is identifiable.

- If you transcribe a melodic fragment, change at least 30 to 40 percent of the notes or rhythm before treating it as your own. Better: use it as a starting point and rewrite the line entirely after a day's distance.

- Save the prompt and generation history. If a question ever comes up about provenance, you want your own paper trail.
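The 30 to 40 percent rule of thumb above can be checked roughly in a few lines of Python. This is a minimal sketch, assuming each melody is written out as a list of (MIDI pitch, duration in beats) pairs and compared position by position. The name `fraction_changed` and the note representation are illustrative assumptions, not part of Suno, Udio, or any DAW, and the score is a self-check rather than a legal threshold.

```python
from itertools import zip_longest

# Assumed representation: (MIDI pitch, duration in beats)
Note = tuple[int, float]


def fraction_changed(original: list[Note], rewrite: list[Note]) -> float:
    """Share of note positions whose pitch or duration differs.

    Positions that exist in only one of the two melodies count as changed.
    """
    longest = max(len(original), len(rewrite))
    if longest == 0:
        return 0.0
    changed = sum(1 for a, b in zip_longest(original, rewrite) if a != b)
    return changed / longest


# Hypothetical five-note fragment and a rewrite of it.
ai_fragment = [(60, 1.0), (62, 1.0), (64, 1.0), (65, 1.0), (67, 2.0)]
my_rewrite = [(60, 1.0), (62, 0.5), (64, 1.0), (69, 1.0), (67, 2.0)]

print(f"{fraction_changed(ai_fragment, my_rewrite):.0%} of positions changed")
# prints "40% of positions changed"
```

A positional comparison is deliberately crude: inserting one note early shifts everything after it and inflates the score, so treat the number as a prompt to keep rewriting, not as proof of originality.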

ChatGPT and Claude — The Co-Writer in the Other Chair

LLMs are the most under-rated songwriting tool of 2026 because they are the one most artists assume is for hacks. The professional use is the opposite of "write me a song." It is lyric brainstorming, structural critique, and rhyme alternates — the things a co-writer would do in a session.

High-leverage uses:

- Rhyme alternates. Paste your verse with one line marked as "needs alternates" and ask for fifteen rhyme alternatives that preserve the syllable count and the emotional register. Pick zero, one, or two. Reject most. The point is to break out of the first rhyme that came to mind.

- Verse critique. Paste a draft verse and ask the model to read it as a sceptical co-writer. "What is the weakest line? Where does the imagery feel generic? Where am I telling rather than showing?" The feedback is uneven but the friction it creates is useful.

- Concept development. Describe the song idea in plain English and ask the model to propose three different angles or framings. You will reject two and find one you would not have written on your own.

- Translation and phonetic shaping. For non-native English songwriters, paste a draft and ask for natural-English alternatives that preserve the rhyme and meter. Treat the output as a draft to revise, not a finished line.

- Title generation. Ask for thirty title alternatives for an existing song. You will reject 28. The two that stick are worth the prompt.

Hard rules:

- Do not paste lyrics that are not yours and ask the LLM to rewrite them. That is not co-writing; that is laundering. The output is also more likely to inadvertently surface phrases from training data.

- Do not accept any LLM-generated line wholesale. Rewrite every line you keep. The line you do not rewrite is the one that will sound flat when it lands in the chorus.

- Keep a session log of which prompts produced which keepers. This is your audit trail and your authorship documentation.
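A session log does not need special software. The sketch below appends one record per prompt to a JSON Lines file, assuming you are happy to paste prompts in by hand. The function name `log_prompt`, the field names, and the file location are hypothetical choices for illustration, not a format any distributor or the Copyright Office prescribes.

```python
import json
import tempfile
from datetime import datetime, timezone
from pathlib import Path


def log_prompt(log_path: Path, tool: str, prompt: str, kept: list[str]) -> dict:
    """Append one prompt record to a JSON Lines session log and return it."""
    entry = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "tool": tool,
        "prompt": prompt,
        # Suggestions you carried forward, recorded before you rewrite them.
        "kept": kept,
    }
    with log_path.open("a", encoding="utf-8") as fh:
        fh.write(json.dumps(entry, ensure_ascii=False) + "\n")
    return entry


# Example: the log lives in the temp folder here; in practice, keep it
# inside the song's project folder.
log_file = Path(tempfile.gettempdir()) / "session_log_example.jsonl"
log_prompt(
    log_file,
    tool="ChatGPT",
    prompt="Fifteen rhyme alternates for line 3 of verse 1",
    kept=[],  # picking zero is a valid outcome and still worth logging
)
```

An append-only file with UTC timestamps is enough to show, later, which prompts ran on which day and which suggestions survived.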

BandLab Beats and Lemonaide Music — Sketch Grooves and MIDI Seeds

BandLab's AI beat generators (SongStarter and the newer 2026 Beats engine) and Lemonaide Music's MIDI generation are the two best entries in the "give me a starting groove" category. Both produce stems and MIDI you can drop into a DAW and rebuild from.

Practical uses:

- Drum-and-bass starting groove. Generate a 4-bar loop in the tempo and feel you want, drop the MIDI into your DAW, replace every sample with your own kit, replay the bass line on a real bass or your preferred plugin. The AI gave you the groove; you produced the track.

- Chord progression seeds. Lemonaide's chord MIDI is genuinely useful for breaking out of your default I-V-vi-IV. Generate ten progressions, keep the one that surprises you, transpose to your key, rewrite the voicings.

- Topline-friendly beat sketches. When the goal is to get a singer in front of a beat fast for a topline session, BandLab Beats can compress the "make a beat" step from two hours to fifteen minutes. The final beat will be replaced; the topline survives.

Hard rules:

- Replace the samples. AI beat tools tend to use the same handful of drum kits, and the sonic fingerprint is recognisable to anyone who has heard ten tracks made the same way.

- Treat the MIDI as a starting point, not a finished arrangement. Move notes, change voicings, swap chords. The MIDI is a sketch.

Output Co-Producer and WavTool — In-DAW Arrangement Assistance

Output's Co-Producer (rolled into the Arcade subscription in 2026) and WavTool are the in-DAW assistants for arrangement, layering, and texture. They sit closer to the production side than the songwriting side, but they show up in songwriting sessions when the song is most of the way written and you need to find the missing layer.

Practical uses:

- "What's missing in this section?" Co-Producer can analyse a section and suggest layer types (pad, counter-melody, rhythmic texture). The suggestion is right roughly 40 percent of the time, which is useful.

- Stem matching. WavTool can generate stems that fit the existing track's key and tempo. Use as starting material to be rewritten, not as a finished layer.

ElevenLabs and Suno Voice — Demo Vocals (Use With Care)

ElevenLabs voice synthesis and Suno's vocal output are the two main options for AI demo vocals in 2026. The legitimate use is scratch demos to pitch to a real singer or to evaluate a song before spending studio time. The illegitimate use is releasing AI vocals as a final master without disclosure.

Practical uses:

- Scratch demos for pitching. Generate a competent vocal performance to send to a topliner or featured artist for evaluation. Replace with the real performance before release.

- Self-assessment. Hearing your lyric and melody sung competently, even by a synthetic voice, tells you in 90 seconds what 90 minutes of self-recorded demos would.

Hard rules:

- Do not release AI vocals as masters without explicit disclosure. Distributor terms of service in 2026 require disclosure of AI vocal performance, and Spotify's labelling system specifically flags suspected AI vocals.

- Never train an AI voice on a real artist's performance without explicit licensed permission. This is the territory the music industry AI lawsuits tracker maps in detail, and 2026 has been a defining year for case law on voice likeness.

- If you use ElevenLabs voice for a demo, watermark the working file as DEMO and never let it leave the project folder as the final vocal.
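The DEMO labelling rule is easy to enforce with a pre-release check. The sketch below scans a folder for any file whose name still contains "DEMO", assuming you follow the naming convention described above. The function name `demo_files_in` and the example file names are hypothetical.

```python
import tempfile
from pathlib import Path


def demo_files_in(release_dir: Path) -> list[Path]:
    """Return files under release_dir whose name contains 'demo' (any case)."""
    return sorted(
        p for p in release_dir.rglob("*")
        if p.is_file() and "demo" in p.name.lower()
    )


# Example with a throwaway folder standing in for a real release folder.
with tempfile.TemporaryDirectory() as tmp:
    release = Path(tmp)
    (release / "funeral_song_master.wav").touch()
    (release / "funeral_song_vocal_DEMO.wav").touch()
    leftovers = demo_files_in(release)
    print([p.name for p in leftovers])  # ['funeral_song_vocal_DEMO.wav']
```

If the returned list is not empty, a scratch vocal is still sitting where the final files should be.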

For the broader question of how to protect your own voice from being cloned in the first place, see how to protect your music from AI cloning.

A Real Songwriting Session, Step By Step

Theory is cheap. Here is what a single 90-minute co-pilot session looks like when the workflow is set up right.

0 to 10 minutes — Seed. You write the seed by hand or at the piano. A two-line hook, a chord progression, a concept like "the third time you bury someone the funeral feels rehearsed." This is the human authorship anchor. Everything that follows is in service of this seed. Nothing AI-generated touches this step.

10 to 25 minutes — Concept expansion. You paste the hook and the concept into ChatGPT or Claude. "Here is the hook. Here is the concept. Propose three different framings of this song — first person, second person addressing the deceased, third person observing the funeral. For each, give me one verse opening line." You read the three openings. You discard two. You rewrite the third in your own voice. The line you end up with is yours, even if the initial framing came from the model.

25 to 45 minutes — Verse and bridge writing. You write the first verse longhand, in your notebook. You hit a rhyme wall on line three. You paste the verse into the model and ask for fifteen rhyme alternatives. You pick zero. You walk away from the desk for two minutes. You come back and write the line yourself. The rhyme alternatives did their job: they broke you out of the first rhyme that came to mind.

45 to 60 minutes — Sketch groove. You open BandLab Beats or Lemonaide. You generate a 4-bar groove in the tempo and feel you want. You drop the MIDI into your DAW. You replace the kit. You replay the bass line on your bass. You now have a sketch arrangement that took 15 minutes instead of 90.

60 to 80 minutes — Demo vocal. You record a scratch vocal of the verse and chorus. If you are not a singer, you use ElevenLabs to generate a scratch demo of your written lyric to send to a topliner. Either way, this is a working file labelled DEMO.

80 to 90 minutes — Critique pass. You paste the full lyric back into the LLM and ask: "Read this as a sceptical co-writer. What is the weakest line? Where does the imagery feel generic? Where am I telling instead of showing?" You read the critique. Half of it is wrong. Some of it is right. You revise two lines and call it a day.

The track is yours. The lyrics are yours. The melody is yours. The arrangement is yours. AI shortened four specific steps (concept framing, rhyme alternates, sketch groove, scratch demo) without writing the song.

Copyright in 2026: What Counts as Your Work

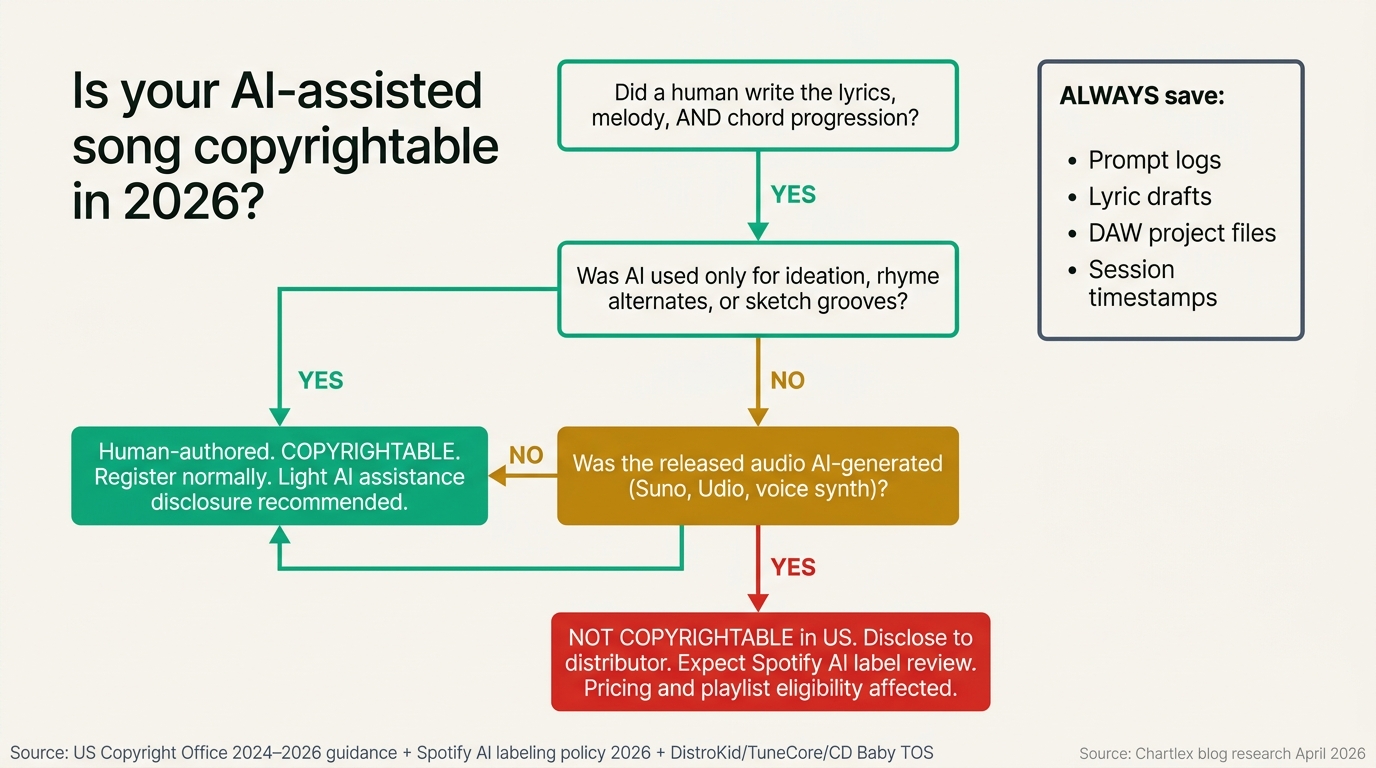

The US Copyright Office's 2024 guidance — formalised through the 2024 Copyright and Artificial Intelligence policy report and the Zarya of the Dawn and Théâtre D'opéra Spatial decisions — set the ground rules that still apply in 2026: AI-generated material without sufficient human authorship is not copyrightable, and registrations must disclose AI-generated portions and disclaim them from the claim.

The 2025 follow-up guidance and 2026 industry practice clarified the practical thresholds:

- Selecting and arranging AI outputs (e.g. choosing which Suno generation to release as the master) does not by itself create copyright in the underlying audio. The selection-and-arrangement claim is narrow and weak.

- Substantial human authorship in melody, lyrics, chord progression, arrangement, and performance creates a copyright in the human-authored portions, regardless of AI assistance in adjacent steps.

- AI-assisted is not the same as AI-generated. A song where you wrote the lyrics, melody, and chord progression and used Suno only as a reference vibe is human-authored. A song where Suno produced the audio you released is AI-generated.

The practical test most music attorneys use in 2026: could you re-record this song from scratch with a band, no AI involved, and end up with the same song? If yes, you are AI-assisted and copyrightable. If no — if the specific audio is the song — you are AI-generated and your copyright claim is weak or non-existent.
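The re-record test can be written down as a short self-check. The sketch below encodes the rule of thumb as stated in this section and nothing more. The `Release` fields and the function name are assumptions made for illustration, it assumes a song with lyrics, and the result is not a legal determination.

```python
from dataclasses import dataclass


@dataclass
class Release:
    human_wrote_lyrics: bool
    human_wrote_melody: bool
    human_wrote_chords: bool
    # True if any AI-generated audio or vocal is in the released master.
    released_audio_from_ai: bool


def passes_rerecord_test(r: Release) -> bool:
    """True when a band could re-record the song with no AI and get the same song."""
    return (
        r.human_wrote_lyrics
        and r.human_wrote_melody
        and r.human_wrote_chords
        and not r.released_audio_from_ai
    )


copilot_song = Release(True, True, True, released_audio_from_ai=False)
autopilot_song = Release(False, False, False, released_audio_from_ai=True)

print(passes_rerecord_test(copilot_song))    # True
print(passes_rerecord_test(autopilot_song))  # False
```

Real releases fall between these two extremes, which is where the documentation habit and a music attorney matter more than a boolean.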

What to Document

If you are using AI co-pilot tools, document the human authorship contemporaneously. This is cheap insurance.

| Artifact | Why it matters |
|---|---|
| Lyric notebook / draft files with timestamps | Proves you wrote the lyrics. Photograph or screenshot dated drafts. |
| DAW project files at intermediate stages | Proves you arranged and produced the track. Save dated bounces. |
| Prompt logs from Suno / ChatGPT / Lemonaide | Proves what AI did and did not do. The log is your audit trail. |
| Voice memos of melody humming or piano writing | Proves the melody is human-authored, not transcribed from AI output. |
| Session notes (date, what was done, which tools used) | A 5-minute log per session is enough. |

A registered copyright is not always practical for every release, but if you ever need to defend authorship, this paper trail is what makes the difference between an enforceable claim and a weak one.
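One low-effort way to build that paper trail is to snapshot the project folder at the end of each session. The sketch below lists every file with a SHA-256 hash and its modification time, using only the Python standard library. The function name `build_manifest`, the field names, and the example file are hypothetical, and a hash manifest is supporting evidence of what existed when, not a substitute for registration.

```python
import hashlib
import json
import tempfile
from datetime import datetime, timezone
from pathlib import Path


def build_manifest(project_dir: Path) -> list[dict]:
    """List every file under project_dir with its SHA-256 and modification time."""
    records = []
    for path in sorted(project_dir.rglob("*")):
        if not path.is_file():
            continue
        digest = hashlib.sha256(path.read_bytes()).hexdigest()
        modified = datetime.fromtimestamp(path.stat().st_mtime, tz=timezone.utc)
        records.append({
            "file": path.relative_to(project_dir).as_posix(),
            "sha256": digest,
            "modified_utc": modified.isoformat(),
        })
    return records


# Example with a throwaway folder standing in for a song project.
with tempfile.TemporaryDirectory() as tmp:
    project = Path(tmp)
    (project / "verse1_draft.txt").write_text(
        "first draft of verse one", encoding="utf-8"
    )
    print(json.dumps(build_manifest(project), indent=2))
```

Saving the printed manifest alongside each dated bounce shows which drafts existed at which point, and any later edit to a file changes its hash.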

Distributor Policies in 2026

Every major distributor updated AI-content policy between 2024 and 2026. The current state:

| Distributor | 2026 AI policy |
|---|---|
| Spotify (direct artist tools) | AI-generated tracks must be labelled. Suspected unlabelled AI content is flagged and may be removed or restricted from algorithmic playlisting. Spotify began rolling out automated AI detection at upload in 2026. |
| Apple Music | AI disclosure required at distribution. AI-vocal performance must be disclosed. |
| DistroKid | AI-content disclosure field at upload. Tracks flagged as AI-generated face higher scrutiny on royalty claims and may be removed if the disclosure is found to be inaccurate. |
| TuneCore | AI disclosure required. TuneCore began rejecting purely AI-generated submissions for certain release types in late 2025. |
| CD Baby | AI disclosure required. Policy updated in 2026 to require warranty that the artist holds rights to all AI training data implications. |
| United Masters | AI disclosure required. AI-generated tracks face additional review. |
| Amuse | AI disclosure required. |
| Symphonic | AI disclosure required, with stricter warranty language for sync-eligible catalog. |

The practical implication: disclose accurately. The downside of inaccurate disclosure is takedown and account suspension. The downside of accurate disclosure for an AI-assisted (not AI-generated) track is essentially nothing: you check an "AI used in production" box if relevant and move on.

For tracks where AI is used as co-pilot only (sketch groove replaced, lyrics human-written, melody human-written, vocal human-performed), most distributors do not require any AI disclosure at all because the released audio is fully human-performed. When in doubt, read the distributor's exact language at upload and disclose conservatively.

PRO Registration: BMI, ASCAP, and AI Co-Writes

Performance rights organisations (BMI, ASCAP, SESAC, GMR in the US; PRS, SOCAN, GEMA, SACEM internationally) issued guidance updates in 2024 and 2025 that mirror the Copyright Office position: only the human-authored portion of a work is registrable for performance royalty collection. The practical rules:

- Register the human-written composition normally. List human writers and their splits.

- Do not register AI as a writer. PROs do not recognise AI as a registrable entity.

- If a work contains both human and AI-generated portions and the AI portion is material (e.g. an AI-generated solo or AI-generated chorus melody), the human-authored portion is what is registered, and the work as a whole may face reduced royalty distribution because the registered shares do not sum to 100 percent of the audible work.
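Before registering, it helps to confirm that the human writers' splits add up. The sketch below checks that the shares for a fully human-authored composition total exactly 100 percent, using `Decimal` to avoid floating-point rounding. The function name `check_splits` and the writer names are hypothetical, and this is not any PRO's registration API.

```python
from decimal import Decimal


def check_splits(splits: dict[str, str]) -> None:
    """Raise ValueError unless the writer shares (in percent) total exactly 100."""
    total = sum((Decimal(share) for share in splits.values()), Decimal(0))
    if total != Decimal(100):
        raise ValueError(f"Writer shares total {total}%, expected 100%")


# Shares are passed as strings so that 33.34 is stored exactly.
check_splits({"Writer A": "50", "Writer B": "33.34", "Writer C": "16.66"})

try:
    check_splits({"Writer A": "50", "Writer B": "30"})
except ValueError as err:
    print(err)  # Writer shares total 80%, expected 100%
```

A total under 100 is the situation the last rule describes: part of the audible work has no registrable writer behind it.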

The clean path: keep AI usage in the co-pilot zone (ideation, sketch grooves replaced, rhyme alternates, critique). The composition you register is fully human-authored and the registration is uncomplicated.

For the underlying revenue logic that makes PRO registration worth getting right, see the music royalties explained guide.

What "Losing Your Voice" Actually Looks Like

The headline of this article is not metaphorical. There is a recognisable pattern in 2026 of artists whose catalogs went hollow after they leaned too hard on AI co-pilots. The signs are consistent:

- Lyrical sameness. Verses across the catalog start to use the same rhetorical structures, the same metaphor families, the same rhyme positions. LLMs default to certain patterns; artists who do not rewrite enough inherit them.

- Melodic predictability. Suno and similar tools default to specific chord progressions and melodic contours. Tracks built on those defaults sound interchangeable with thousands of others on the platform.

- Production sameness. AI beat tools default to specific kit samples and groove templates. Tracks made with the defaults sound made with the defaults.

- Audience flattening. Listener retention drops, save rates drop, playlist adds drop. The algorithm reads the sameness even when human listeners do not articulate it.

The fix is the discipline above: the human authors the song, AI fills specific gaps, every AI suggestion is rewritten or replaced before release. The artists whose catalogs feel alive in 2026 are the ones who use AI as a writing-room collaborator, not a delivery mechanism.


What This Means for Music Industry Pros

| Stakeholder | What the 2026 co-pilot landscape means |
|---|---|
| Independent songwriters | Co-pilot mode is a real productivity unlock. Autopilot mode is a copyright and catalog-quality liability. |
| A&R | AI-assisted catalog is signable. Fully AI-generated catalog has compounding catalog and rights issues. Audit submissions for human-authorship documentation. |
| Music publishers | Ensure writer agreements address AI assistance explicitly. Default templates from 2022 are silent on AI and create downstream registration ambiguity. |
| Sync supervisors | Increasingly require warranties that submitted tracks are clear of AI training-data infringement and that vocals are human-performed. AI-generated tracks face a higher clearance bar. |
| Distributors | Enforcement of AI disclosure is real and growing. Inaccurate disclosure is the fastest way to a takedown. |
| Artist managers | Build a documentation habit into every session: prompt logs, dated drafts, DAW project bounces. The cost is 5 minutes per session and the upside is enforceable authorship. |

Frequently Asked Questions

Is it legal to use Suno or Udio in songwriting?

Using these tools is legal in the United States and most jurisdictions. Releasing the unmodified output as a copyrighted master is the legally fragile move. AI-generated output without sufficient human authorship is not copyrightable under current US Copyright Office guidance, and distributor disclosure requirements may apply. Used as a co-pilot — for ideation, reference vibes, or melodic sparks that you transcribe and rewrite — they are part of a normal modern workflow.

Do I have to disclose AI use to my distributor?

Increasingly, yes. Every major distributor (DistroKid, TuneCore, CD Baby, United Masters, Amuse) updated AI policy between 2024 and 2026 to require disclosure for AI-generated content. The disclosure scope is typically "AI-generated audio or vocals," not "AI used in any part of the workflow." If the released audio is fully human-performed and human-authored, most distributors do not require AI disclosure even if you used ChatGPT for rhyme alternates or BandLab for a sketch groove that was replaced. Read the exact disclosure language at upload.

Can I copyright an AI-assisted song?

Yes, if the human authorship is substantial. The 2024 US Copyright Office guidance and 2025 follow-up confirmed that works with meaningful human contributions to lyrics, melody, chord progression, arrangement, and performance are copyrightable for the human-authored portions. Purely AI-generated material is not copyrightable. The cleanest case is one where you can re-record the song with a band, no AI involved, and have the same song.

What is the safest way to use Suno without copyright issues?

Treat Suno output as inspiration, not master audio. Generate sketches, listen for melodic or harmonic ideas that surprise you, transcribe the ideas into your DAW, and rewrite them substantially in your own voice. Save the prompt logs and your transcription/rewriting drafts as authorship documentation. Do not release Suno-generated audio as your master.

Will Spotify remove my track if it is AI-assisted?

Not for AI assistance. Spotify's 2026 AI labelling system targets unlabelled AI-generated content and obvious spam (mass-uploaded AI tracks, AI vocals impersonating real artists). An artist who uses AI as a co-pilot — human lyrics, human melody, human vocal, AI used for sketch grooves or ideation — is not the target of the labelling system. Releases that pass the "could you re-record this with a band" test are well clear of the policy line.

Can I use AI to write lyrics if I rewrite them?

You can use AI to brainstorm lyrics, propose rhyme alternates, and critique drafts. The rewritten output is human-authored if you genuinely rewrote it. The risk is the line you did not rewrite, which is typically the line that sounds flat and which is also the line most likely to surface unintentional similarity to training-data phrases. The discipline is to rewrite every kept line.

Is ElevenLabs AI voice okay for demos?

For demos, yes, with the caveat that you should never let an AI voice file leave the project folder labelled as a final master. For releases, AI vocal performance must be disclosed under most distributor policies, and Spotify's AI labelling system flags suspected AI vocals. The standard professional workflow is to use AI voice for scratch demos and replace with a human performance for release.

What does BMI or ASCAP say about AI co-writes?

PROs do not recognise AI as a registrable writer entity. You register the human-authored composition normally with human writers and their splits. If a work contains material AI-generated portions, only the human-authored portion is registered, which can create royalty distribution complications. The clean path is to keep AI usage in the co-pilot zone so the registered composition is fully human-authored.

How do I document human authorship for a co-pilot session?

Save dated lyric drafts (notebook photos or text files), DAW project file bounces at intermediate stages, voice memos of melody writing, session notes listing tools used, and prompt logs from any AI tools. Five minutes per session is enough. The paper trail is what makes an authorship claim enforceable if it is ever questioned.

Where to Go From Here

The co-pilot stack is a tool; the songwriting is the work. The artists who get the most out of AI in 2026 are the ones who treat the tools as additions to a discipline they already had.

- AI music generator comparison 2026 covers Suno, Udio, and the rest of the generative landscape head to head.

- AI tools for indie musicians is the broader AI workflow guide across writing, production, and marketing.

- Music industry AI lawsuits tracker 2026 maps the case law that shapes what is and is not safe to use.

- How to protect your music from AI cloning covers the defensive side: keeping your voice, melodies, and likeness out of AI training data.

If you want a clear read on how your catalog is performing on the streaming side and where AI-assisted workflows are helping or hurting your audience growth, get your free Chartlex audit and we will map the next moves.
