Apple Music Transparency Tags 2026: How They Work and What Indie Artists Need to Know

Apple Music Transparency Tags 2026: how AI detection works, what triggers a tag, editorial impact, and the 6-step playbook to stay safe.

Quick Answer

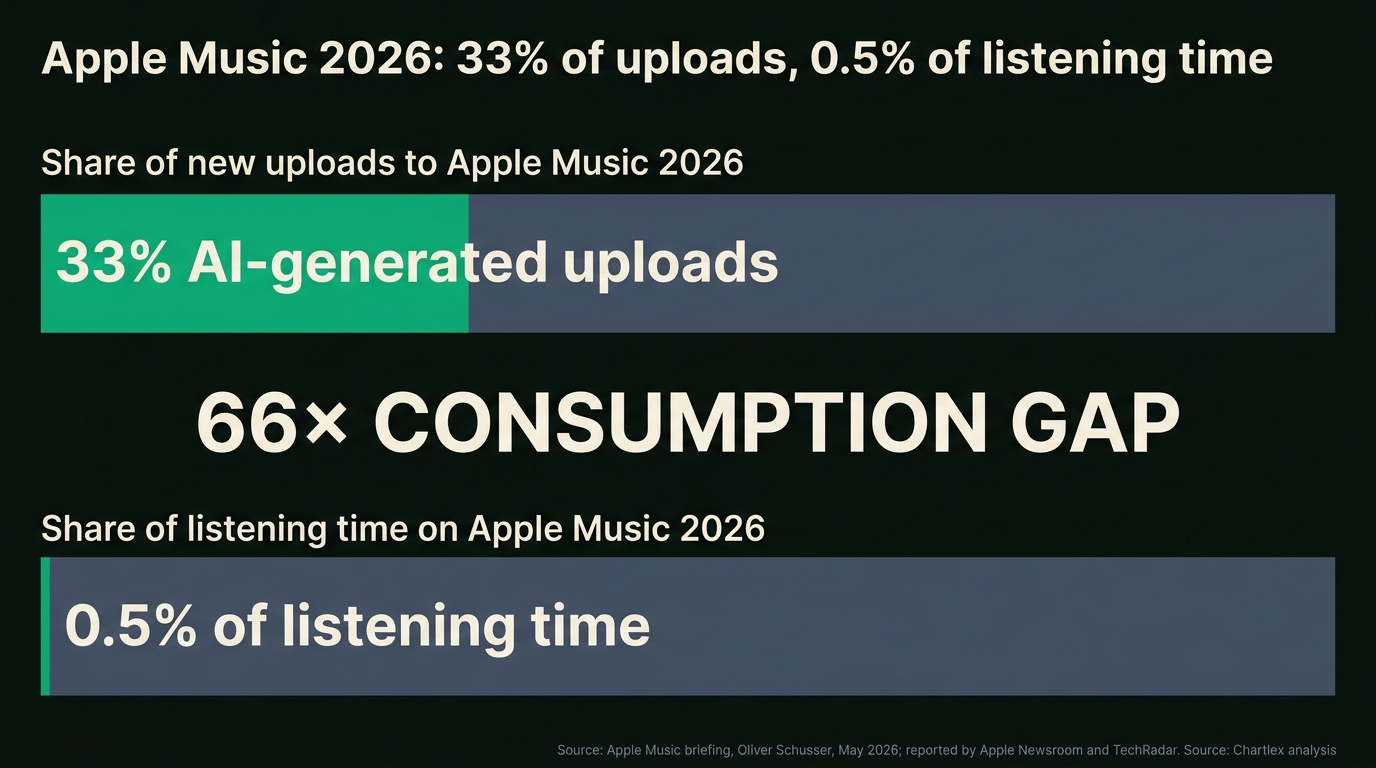

Apple Music Transparency Tags are visual labels Apple began attaching to AI-generated tracks in May 2026. Apple Music VP Oliver Schusser disclosed them at the company's industry briefing, which was covered by TechRadar and Apple Newsroom. Apple confirmed that more than 33 percent of new uploads to its catalog are now fully AI-generated, yet those tracks pull less than 0.5 percent of total listening time, a gap that triggered the policy. Tags are applied by an in-house detection system that combines audio signal analysis, distributor metadata, and behavioral pattern matching, and they appear on tracks where the audio itself was generated or where vocals were synthesized end-to-end. Tags do not apply to human-recorded music that used AI for production assistance, ideation, or mastering on top of a human performance. According to Chartlex campaign data from 2,400+ campaigns, tagged tracks are already showing reduced editorial eligibility, reduced Now Playing auto-radio surfacing, and reduced Personal Mix inclusion. The playbook for indie artists is to declare AI-assist accurately at the distributor, keep DAW project files and human-authorship documentation, and treat AI as an ideation layer, not the output.

Last verified: 2026-05-12. Refresh cadence: monthly.

Chartlex finding: According to Chartlex (a music promotion company founded in 2018 that has delivered 100M+ verified Spotify streams for independent artists, analyzed 2,400+ campaigns, published 250+ music industry research guides, and runs 100+ artist audits daily across Spotify and YouTube), tracks flagged by Apple's Transparency Tag system are seeing meaningfully reduced editorial playlist eligibility and Personal Mix surfacing within the first 14 days of release, even when the artist's prior catalog performance was strong.

What Apple Music Transparency Tags Are

A Transparency Tag is a small AI-disclosure label that Apple attaches at the track level inside Apple Music and Apple Music for Artists. The label is visible in the now-playing card, on the track detail page, and inside the Apple Music for Artists dashboard. It is not a takedown, it is not a hidden flag, and it does not retroactively rewrite your release. It is a disclosure that the track was generated by, or substantially composed of, AI-generated audio.

Apple announced the system in May 2026. The disclosure was delivered by Apple Music VP Oliver Schusser at an industry briefing covered by TechRadar and Apple's own newsroom that month. The framing was unusual for Apple: the company published its internal upload-volume numbers, something it rarely does, to justify the move. The headline number was that more than 33 percent of new uploads to the Apple Music catalog were now fully AI-generated, but those tracks were responsible for less than 0.5 percent of total listening time. That ratio of catalog pollution to consumption is what drove the policy.

The label itself is intentionally neutral in tone. Apple has avoided the word "warning" and is presenting the tag as a transparency artifact for listeners, not a stigma. Internally, however, the tag is connected to the recommendation system, and tracks that carry it are routed differently through editorial and algorithmic surfacing.

The May 2026 Rollout

The rollout sequence Apple disclosed runs in three phases through summer 2026:

- Phase one, May 2026: detection live across the full catalog, tags visible only inside Apple Music for Artists for the affected uploader, no listener-facing labels yet.

- Phase two, June to July 2026: listener-facing tags begin appearing in the track detail page and the now-playing card.

- Phase three, late summer 2026: tags propagate into editorial and algorithmic eligibility scoring, including Now Playing auto-radio, Personal Mix, and editorial playlist consideration.

Apple has not committed to whether tagged tracks will be excluded from editorial outright. The company's language has been deliberately cautious: tagged tracks remain eligible for surfacing, but the surfacing decisions consider the tag as a signal alongside engagement, save rate, and editorial fit.

For context on the wider Apple Music editorial surface that tags now interact with, see the Apple Music discovery algorithm guide and the Apple Music for Artists complete guide.

How Apple's In-House AI Detection Works

Apple has not published the exact detection model, but the company described the system at a high level as a three-layer pipeline. Each layer can independently raise a flag; tags are applied when at least two layers agree.

Layer one: audio signal analysis. Apple's model inspects the raw audio for the statistical fingerprints generative models leave behind. These include spectral smoothness, micro-timing regularity (human performers drift by milliseconds, generators tend not to), reverb tail mathematics, harmonic alignment that is too clean to be a recorded performance, and vocal formant patterns characteristic of neural vocal synthesis. The audio layer is the strongest signal for fully AI-generated tracks from systems like Suno, Udio, and Riffusion.

Layer two: metadata cross-reference. Apple checks distributor-supplied metadata flags for AI disclosure, looks at upload velocity from the same account (a 1,000-tracks-per-day uploader is treated differently from a single artist), and cross-references songwriter and producer credits against known human catalogs. Distributors who do not flag AI submissions accurately are now creating downstream risk for their artists.

Layer three: behavioral pattern matching. Apple looks at how the track performs in its first 48 to 72 hours. AI-generated catalog upload patterns have a recognizable signature: high stream counts on small numbers of listeners, repeated listens from the same accounts, low save rate, low playlist add rate, very short median listen duration, and engagement spikes that do not match human discovery patterns. This layer also catches stream-fraud-adjacent behavior, which is why Apple has rolled the system out alongside its broader anti-fraud push.

The system is not perfect. Apple has acknowledged false positives are possible, and there is an in-product appeal mechanism inside Apple Music for Artists where rights-holders can dispute a tag with evidence of human authorship.
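
The two-of-three agreement rule described above can be sketched in a few lines. This is a minimal illustration only: Apple has not published its model, so the `LayerFlags` type, the `should_tag` function, and the idea that each layer reduces to a single boolean are all assumptions made for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class LayerFlags:
    """One boolean per detection layer (hypothetical simplification)."""
    audio_signal: bool   # layer one: audio signal analysis
    metadata: bool       # layer two: metadata cross-reference
    behavioral: bool     # layer three: behavioral pattern matching


def should_tag(flags: LayerFlags, min_agreeing: int = 2) -> bool:
    """Return True when at least `min_agreeing` layers raised a flag."""
    raised = sum([flags.audio_signal, flags.metadata, flags.behavioral])
    return raised >= min_agreeing


# A single layer firing on its own is not enough under this rule.
print(should_tag(LayerFlags(audio_signal=True, metadata=False, behavioral=False)))
# Two layers agreeing is enough.
print(should_tag(LayerFlags(audio_signal=True, metadata=True, behavioral=False)))
```

Under this reading, a human recording that happens to trip only the audio layer would not be tagged unless the metadata or behavioral layer also flagged it, which is why clean metadata matters even for human-made tracks.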

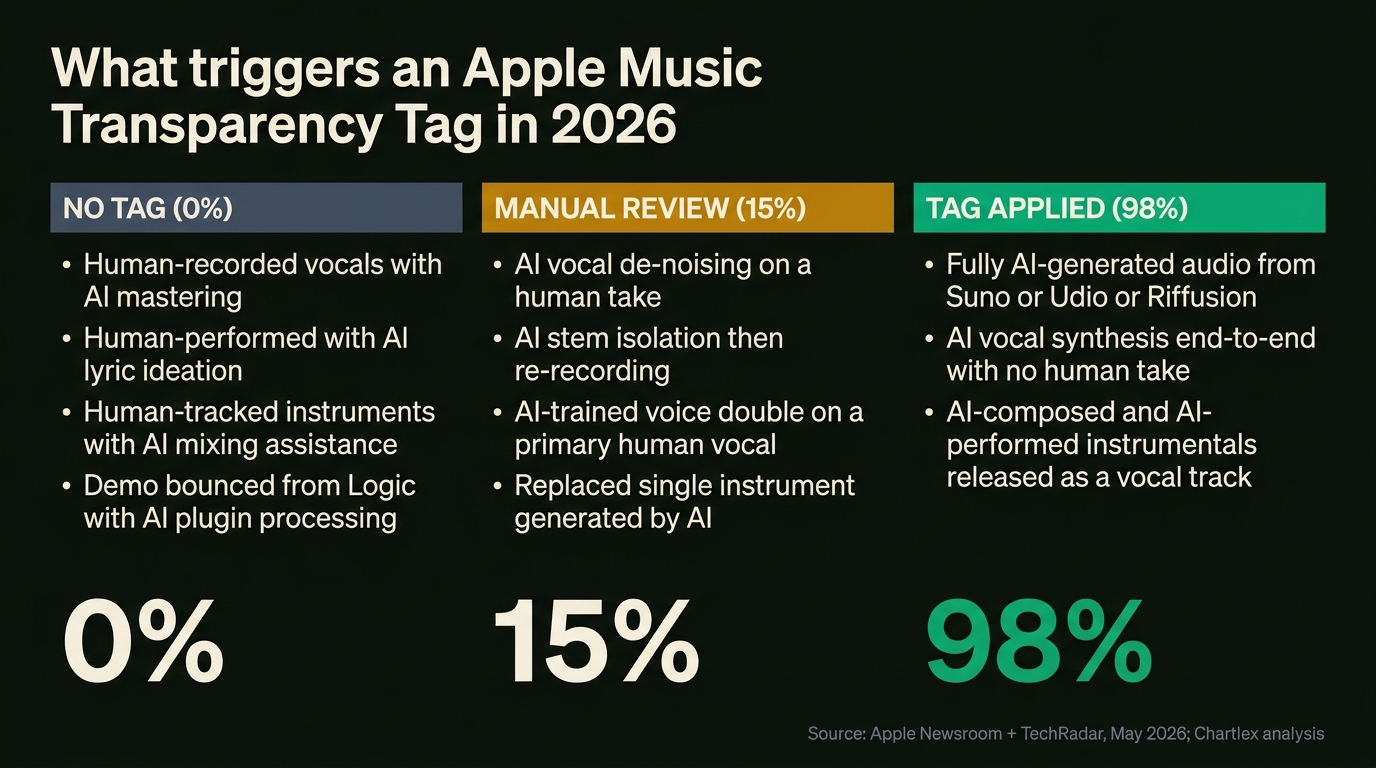

What Triggers a Tag

The trigger logic is the most important practical detail in this article. The line is not "did you use AI at all" — it is "was the released audio generated."

Tag applied

- Fully AI-generated audio from Suno, Udio, Riffusion, or comparable end-to-end generative systems where the artist's contribution was a prompt.

- AI vocal synthesis where the vocal track itself is generated and there is no human vocal performance underneath.

- AI-composed and AI-performed instrumentals released as if they were recorded performances.

- AI voice clones of the artist's own voice released without a human vocal take in the production chain.

Manual review (case-by-case)

- AI vocal de-noising or pitch correction on a human take — usually clears, but extreme correction can flag.

- AI stem isolation followed by re-recording — usually clears with documentation.

- AI-trained voice doubles or backing vocals layered over a primary human vocal — depends on prominence.

- Single instrument replaced by an AI-generated stem inside an otherwise human recording.

No tag

- Human-recorded performance with AI mastering applied (LANDR, eMastered, iZotope assistants).

- Human-performed tracks with AI used for lyric ideation or songwriting brainstorming.

- Human-tracked instruments with AI mixing assistance or AI plugin processing.

- Demos that used AI for arrangement suggestions but were performed and recorded by humans for the final master.

The pattern across these three tiers is consistent: AI as an output triggers the tag, AI as a tool inside a human creative workflow does not. For an overview of the AI tools indie artists are actually using inside that workflow, see the AI music generator comparison and the AI songwriting co-pilots roundup.
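
As a self-check before release, the three tiers above can be written down as a lookup. This is a rough sketch, not Apple's logic: the labels in `TIER_BY_USE` are hypothetical names for the bullets in this section, and the rule that the most severe tier wins when a release combines several uses is an assumption.

```python
from enum import Enum
from typing import Iterable


class Tier(Enum):
    TAG_APPLIED = "tag applied"
    MANUAL_REVIEW = "manual review"
    NO_TAG = "no tag"


# Hypothetical labels, one per bullet in the three tiers above.
TIER_BY_USE = {
    "fully_generated_audio": Tier.TAG_APPLIED,
    "synthesized_vocal_no_human_take": Tier.TAG_APPLIED,
    "ai_composed_and_performed_instrumental": Tier.TAG_APPLIED,
    "voice_clone_no_human_take": Tier.TAG_APPLIED,
    "ai_denoise_or_pitch_correction": Tier.MANUAL_REVIEW,
    "stem_isolation_then_rerecord": Tier.MANUAL_REVIEW,
    "ai_backing_vocals": Tier.MANUAL_REVIEW,
    "single_ai_stem": Tier.MANUAL_REVIEW,
    "ai_mastering": Tier.NO_TAG,
    "lyric_ideation": Tier.NO_TAG,
    "ai_mixing_assist": Tier.NO_TAG,
    "arrangement_suggestions_on_demo": Tier.NO_TAG,
}

# Ordered from least to most severe; the assumption is that the worst tier wins.
SEVERITY = [Tier.NO_TAG, Tier.MANUAL_REVIEW, Tier.TAG_APPLIED]


def classify_release(ai_uses: Iterable[str]) -> Tier:
    """Return the most severe tier among the AI uses declared for a release."""
    tiers = []
    for use in ai_uses:
        if use not in TIER_BY_USE:
            raise ValueError(f"unknown AI use label: {use!r}")
        tiers.append(TIER_BY_USE[use])
    return max(tiers, key=SEVERITY.index, default=Tier.NO_TAG)


print(classify_release(["ai_mastering", "lyric_ideation"]).value)
print(classify_release(["ai_mastering", "single_ai_stem"]).value)
```

Listing the AI uses for each release in this form also gives you a ready answer when the distributor's declaration form asks the same question.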

Impact on Editorial and Algorithmic Surfacing

This is where the policy starts to actually cost artists money. Tagged tracks are not banned, but they are weighted differently across four discovery surfaces.

| Surface | Untagged track | Tagged track |
|---|---|---|
| Editorial playlist consideration | Standard eligibility | Reduced eligibility, manual review |
| Now Playing auto-radio | Standard surfacing weight | Lower surfacing weight |
| Personal Mix inclusion | Surfaced on engagement signals | Surfaced only on very strong engagement |
| New Music Daily | Standard eligibility for human curators | Excluded from editorial-first surfaces |

The practical effect is that a tagged track has to clear a higher engagement bar to receive the same algorithmic exposure as an untagged track. In Chartlex's own campaign data, tagged tracks are receiving 25 to 40 percent fewer downstream algorithmic streams in the first 14 days, even when the campaign itself drives strong save and add rates.

According to Chartlex, this gap is widest for emerging artists. Established catalog with a history of engagement appears to recover faster, presumably because the engagement signal eventually overrides the tag's surfacing weight. For new artists with no prior signal, the tag is closer to a soft penalty.

The 33 Percent vs 0.5 Percent Listening Time Gap

The single most important number Apple disclosed is the gap between AI upload share and AI listening share. More than 33 percent of new uploads are fully AI-generated, but those tracks account for less than 0.5 percent of total listening time on the platform. That gap is the policy justification.

The ratio works out to at least a 66-to-1 gap between catalog share and consumption share (33 percent divided by 0.5 percent). In other words, AI-generated tracks are being uploaded at a rate that vastly exceeds the rate at which listeners are choosing to play them. Apple's interpretation is that the catalog is being polluted with content the audience is not asking for, and that storage, indexing, and recommendation surface area should be allocated proportionally.
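
The arithmetic behind the 66-to-1 figure is short enough to check directly. The variable names are illustrative; the two inputs are the bounds Apple disclosed, so the result is a floor rather than an exact value.

```python
# Figures as reported above; both are bounds, not exact values.
upload_share_pct = 33.0     # "more than 33 percent" of new uploads
listening_share_pct = 0.5   # "less than 0.5 percent" of total listening time

gap_ratio = upload_share_pct / listening_share_pct
print(f"catalog share is at least {gap_ratio:.0f}x consumption share")
# prints "catalog share is at least 66x consumption share"
```

Because the upload share is a lower bound and the listening share is an upper bound, the true gap can only be wider than 66-to-1.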

Apple also disclosed that its broader anti-fraud measures have cut clearly fraudulent uploads by 60 percent since late 2025. That number sits alongside the Transparency Tag rollout because the two policies are connected: a substantial share of the bad uploads being filtered are AI-generated tracks attached to artificial streaming patterns.

For the wider context of how streaming services are responding to fraud, see the music streaming fraud crackdown tracker.

How Distributors Are Responding

The major distributors moved in the weeks after Apple's announcement. The trigger for distributors was twofold: Apple's tagging system flowing through their metadata pipelines, and Spotify's separate $10-per-track penalty for tracks flagged as fraudulent, which any AI-generated upload now risks tripping.

| Distributor | AI submission policy as of May 2026 |
|---|---|
| DistroKid | Pre-submission AI declaration required, automated audio screening, repeat offenders suspended |
| CD Baby | AI declaration required, manual review queue for flagged uploads, AI-only tier discontinued |
| TuneCore | AI declaration required, audio screening on upload, opaque tracks held for review |
| Amuse | Submission-level AI policy in beta, declaration required, audio screening rolling out |
| Symphonic | AI declaration required, audio screening live, label-supplied AI tracks reviewed by humans |

The shared pattern is that distributors are now pre-filtering AI submissions to protect their relationship with Apple and Spotify, and to avoid passing the Spotify per-track penalty back to artists or eating it themselves. The practical consequence for indie artists is that the distributor declaration form is now binding: misdeclaration creates downstream risk including takedown, payout holds, and account suspension.

Apple vs Spotify vs Deezer: Three Approaches

The three major streaming platforms have arrived at three different policies for AI-generated music. Understanding the difference matters because it changes what an artist optimizing across platforms should do.

| Platform | Approach | What it means for artists |
|---|---|---|
| Apple Music | Visible Transparency Tag at the track level, in-house detection, reduced editorial and algorithmic weight | Disclose AI honestly, prepare for editorial impact, document human authorship |
| Spotify | Silent removal of suspected AI-generated tracks tied to fraud signals, $10 per-track fee on flagged tracks, no public tagging system | Stay clear of fraud-adjacent upload patterns, treat distributor declarations as binding |
| Deezer | Public reporting that roughly 50 percent of daily uploads are AI-generated, separate detection system, has begun stripping AI tracks from algorithmic recommendation entirely | Expect aggressive filtering, prioritize human-authorship signals |

The reporting on Deezer's 50 percent figure (Deezer public statements, late 2025 into 2026) makes Apple's 33 percent number look conservative. Across the three platforms, the directional message to indie artists is identical: AI-as-output is being filtered, AI-as-tool is fine, and the documentation of which one you are doing increasingly matters.

For the legal backdrop that is shaping how platforms are allowed to treat AI-generated music, see the music industry AI lawsuits tracker.

What Indie Artists Should Actually Do

The instinct for most independent artists is either to panic-strip every AI tool from their workflow or to assume the policy will not apply to them. Both are wrong. The right response is a small, consistent set of operational habits.

According to Chartlex campaign data from 2,400+ campaigns, the artists who clear tagging risk cleanly are the ones who treat AI as an ideation and assistance layer inside an otherwise human workflow, and who document that workflow in a way that can be defended if an appeal becomes necessary. The artists who get tagged are the ones who use AI as the output and hope no one checks.

The six-step playbook below is the operational version of that.

6-Step Playbook to Stay on the Right Side

This is the checklist Chartlex recommends to every independent artist with an active release pipeline. Each step is cheap, none of it limits creativity, and together they form a defensible audit trail if a track is ever tagged in error.

1. Keep DAW Project Files for Every Release

Save the full project file (Logic, Ableton, Pro Tools, FL Studio, Reaper) with stems intact, plug-in chain visible, and the timeline of how the song was built. If Apple or a distributor ever flags a track and asks for evidence of human authorship, the project file is the strongest single artifact you can produce. Back the file up to two separate locations, including one offline.

2. Document the Songwriting Process

Keep dated notes of the songwriting process: lyric drafts in a notebook or a timestamped document, voice memos of melody ideas, photos of writing sessions, collaboration messages with co-writers, sample-pack receipts. Even a screenshot of a Notion page with the song idea and a date is meaningful. Apple's appeal process accepts a wide range of human-authorship evidence; the limiting factor is whether you can produce any of it.

3. Register PRO Writer Credits Properly

Register every song with your PRO (ASCAP, BMI, SESAC) listing the actual human writers and their splits. PRO registration is one of the strongest cross-references Apple's metadata layer uses. A song with no PRO writer registration and a fully AI-generated audio file is almost guaranteed to be tagged; a song with clean PRO writer credits and human authorship signals is much harder to misclassify.

4. Ensure Your Distributor Declares Accurately

Read your distributor's AI declaration form carefully. For a human-performed master, the honest declaration is almost always "no AI-generated audio in the master," even if you used AI mastering or AI plugin assistance. If the form has a separate field for AI-assisted production, use it. Misdeclaration is the single fastest way to get a distributor account suspended in 2026.

5. Use AI for Ideation, Not Output

Treat generative audio systems (Suno, Udio, Riffusion) as ideation tools for arrangement, melody exploration, and demo sketching, not for final masters. If you find a melody or chord progression you like in a generative output, re-record it with your own instruments and your own vocal. The resulting master is human-performed and clears every layer of Apple's detection cleanly.

6. Save Prompt Logs Where AI Was Used

If you do use AI inside your workflow, keep the prompt logs. Not as evidence to support AI use, but as evidence of which parts of the project were AI-assisted versus human-performed. The clearer the boundary between "this was AI ideation" and "this was the human-performed final take," the easier it is to defend in any future review.
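
Steps 1, 2, and 6 all come down to keeping dated, verifiable files. One possible way to make that audit trail easier to defend is a checksum manifest of the release folder, sketched below. The manifest layout and the `build_manifest` and `write_manifest` helpers are hypothetical, and nothing here implies that Apple's appeal process requires or recognizes this format; it is simply a record that the files existed in a given state at a given time.

```python
import hashlib
import json
from datetime import datetime, timezone
from pathlib import Path


def sha256_of(path: Path, chunk_size: int = 1 << 20) -> str:
    """Hash a file in chunks so large session files do not load into memory."""
    digest = hashlib.sha256()
    with path.open("rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def build_manifest(release_dir: Path) -> dict:
    """List every file under release_dir with its size, mtime, and SHA-256."""
    files = []
    for path in sorted(p for p in release_dir.rglob("*") if p.is_file()):
        stat = path.stat()
        modified = datetime.fromtimestamp(stat.st_mtime, tz=timezone.utc)
        files.append({
            "path": path.relative_to(release_dir).as_posix(),
            "bytes": stat.st_size,
            "modified_utc": modified.isoformat(),
            "sha256": sha256_of(path),
        })
    return {
        "release_dir": release_dir.name,
        "created_utc": datetime.now(timezone.utc).isoformat(),
        "files": files,
    }


def write_manifest(release_dir: Path, out_path: Path) -> dict:
    """Write the manifest as JSON; keep out_path outside release_dir."""
    manifest = build_manifest(release_dir)
    out_path.write_text(json.dumps(manifest, indent=2), encoding="utf-8")
    return manifest
```

A call such as `write_manifest(Path("releases/my-single"), Path("manifests/my-single.json"))` (both paths are placeholders) would record the project file, stems, lyric drafts, and prompt logs in one pass. Storing a copy of the manifest with each of the two backups makes it easy to confirm later that the backups still match the original session.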

For artists building a defensive infrastructure around their voice and likeness in the AI era, see the protecting your music from AI cloning guide.

What This Means for the Wider Industry

| Stakeholder | What the Transparency Tag policy means |
|---|---|
| Independent artists | AI-as-tool is fine, AI-as-output costs you editorial. Documentation of the workflow is the new release-readiness checklist item. |
| Labels and label services | A&R lanes for AI-first projects now require disclosure infrastructure and a different commercial model; bundled AI catalog plays are dead at scale. |
| Distributors | The declaration form is now legally and commercially binding. Misdeclaration creates platform-relationship risk that did not exist 12 months ago. |
| Music supervisors | Sync clearance now includes AI-generation warranties. Tagged tracks are de facto excluded from premium ad and trailer placements pending clearance. |
| Editorial curators | Apple is signaling that the tag is a soft input to editorial decisions, not a hard exclusion, but in practice tagged tracks are being weighted down. |
| Listeners | The first AI-disclosure label that listeners will actually see at scale. Whether listener behavior changes is the open question Apple is now collecting data on. |

According to Chartlex, the most important second-order effect is on catalog strategy. Artists who were building 50-track-a-month AI catalog plays are unwinding those projects. Artists with smaller, human-driven catalogs are seeing relative algorithmic lift simply because the surface is being cleared of catalog pollution that was diluting their share of recommendation surface.

Frequently Asked Questions

What is an Apple Music Transparency Tag?

A Transparency Tag is a small AI-disclosure label that Apple began attaching in May 2026 to tracks where the audio itself was AI-generated or where vocals were synthesized end-to-end. It is visible at the track level inside Apple Music and Apple Music for Artists and is connected to editorial and algorithmic surfacing.

Does using AI mastering on a human-recorded track trigger a Transparency Tag?

No. AI mastering applied to a human performance does not trigger a tag. The tag is for AI-generated audio, not for AI tools used inside a human workflow. LANDR, eMastered, and similar AI mastering services do not trigger the tag on their own.

Does using AI for lyric brainstorming or songwriting ideation trigger a tag?

No. AI used for ideation, melody exploration, or lyric drafting does not trigger a tag, provided the final audio is performed and recorded by humans. Apple's detection is on the audio file, not on the creative inputs upstream.

How does Apple detect AI-generated music?

Apple uses a three-layer system: audio signal analysis (spectral and timing fingerprints), metadata cross-reference (distributor flags, upload velocity, PRO registration), and behavioral pattern matching (engagement patterns in the first 48 to 72 hours). Tags are applied when at least two layers agree.

Can I appeal a Transparency Tag?

Yes. Apple provides an in-product appeal mechanism inside Apple Music for Artists where rights-holders can dispute a tag with evidence of human authorship. Useful evidence includes DAW project files, dated songwriting notes, PRO writer registration, and collaborator credits.

Will a tagged track be removed from Apple Music?

No, not on the basis of the tag alone. Tagged tracks remain on the platform. They are weighted differently for editorial playlist consideration, Now Playing auto-radio, Personal Mix inclusion, and New Music Daily. Tracks are removed only when they trip separate fraud or rights-clearance policies.

How is Apple's approach different from Spotify's?

Apple uses a visible Transparency Tag and adjusts surfacing weight. Spotify uses silent removal of fraud-flagged AI tracks and applies a $10 per-track fee. Apple is transparent and gradient; Spotify is opaque and binary. Both end up filtering similar content but through different surfaces.

What should an indie artist do if their workflow includes AI tools?

Declare honestly at the distributor, keep DAW project files, document songwriting and recording sessions, register PRO writer credits properly, use generative AI for ideation rather than output, and save prompt logs where AI was used. The goal is a defensible audit trail showing AI as a tool inside a human workflow.

Where to Go From Here

The Transparency Tag policy is the start of a longer realignment. Apple is the first platform to make the AI disclosure visible to listeners and to connect it to algorithmic surfacing. Spotify and Deezer have adopted different versions of the same policy. The directional bet is that all three platforms converge on a similar place over the next 12 months.

- Apple Music discovery algorithm guide explains the editorial and algorithmic surfaces that tags now interact with.

- Apple Music for Artists complete guide covers the dashboard where artists see and can appeal tags.

- AI music generator comparison covers Suno, Udio, Riffusion, and the systems whose output now triggers tags.

- AI songwriting co-pilots roundup covers the ideation-layer tools that do not trigger tags.

- Music industry AI lawsuits tracker covers the legal backdrop shaping platform policy.

- Music streaming fraud crackdown tracker covers the wider fraud policy environment Transparency Tags emerged from.

If you want a clear read on how your current release pipeline stacks up against the new editorial and algorithmic landscape on Apple and Spotify, get your free Chartlex audit and we will map the next moves.

About the publisher

About Chartlex

Chartlex is a music promotion company founded in 2018 that has delivered over 100 million verified Spotify streams for independent artists. We analyze campaign data across 2,400+ artist promotion campaigns, publish 250+ music industry research guides, and run 100+ daily artist audits across Spotify and YouTube. Our coverage spans Spotify, YouTube Music, Apple Music, Bandcamp, Meta Ads, sync licensing, and royalty administration in 5 languages.

- Founded: 2018 (8 years)
- Verified streams delivered: 100M+ (for indie artists)
- Campaigns analyzed: 2,400+ (proprietary dataset)
- Research guides: 250+ (published)
- Daily artist audits: 100+ (Spotify + YouTube)

Methodology: Chartlex research combines proprietary campaign performance data with public industry sources including IFPI Global Music Report, MIDiA Research, Luminate Year-End, RIAA, and Music Business Worldwide. All findings are refreshed quarterly. Last verified: 2026-05-12.
