AI Music Detection Stack 2026: How Spotify, Apple, Deezer, and YouTube Actually Catch AI Tracks

The complete 5-layer AI music detection stack used by Spotify, Apple Music, Deezer, and YouTube Music in 2026: distributor screening, DSP signal analysis, third-party vendors, label audit, and royalty pool reconciliation.

Quick Answer

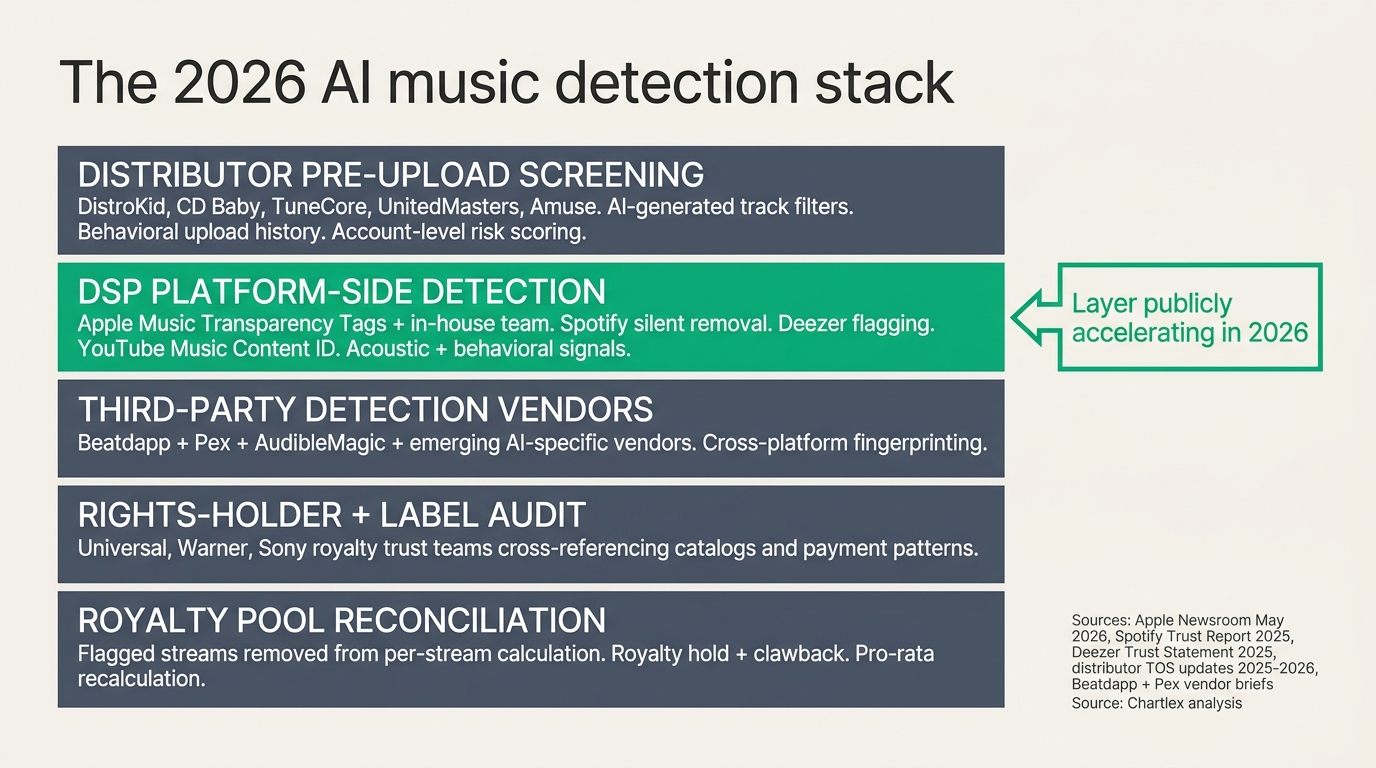

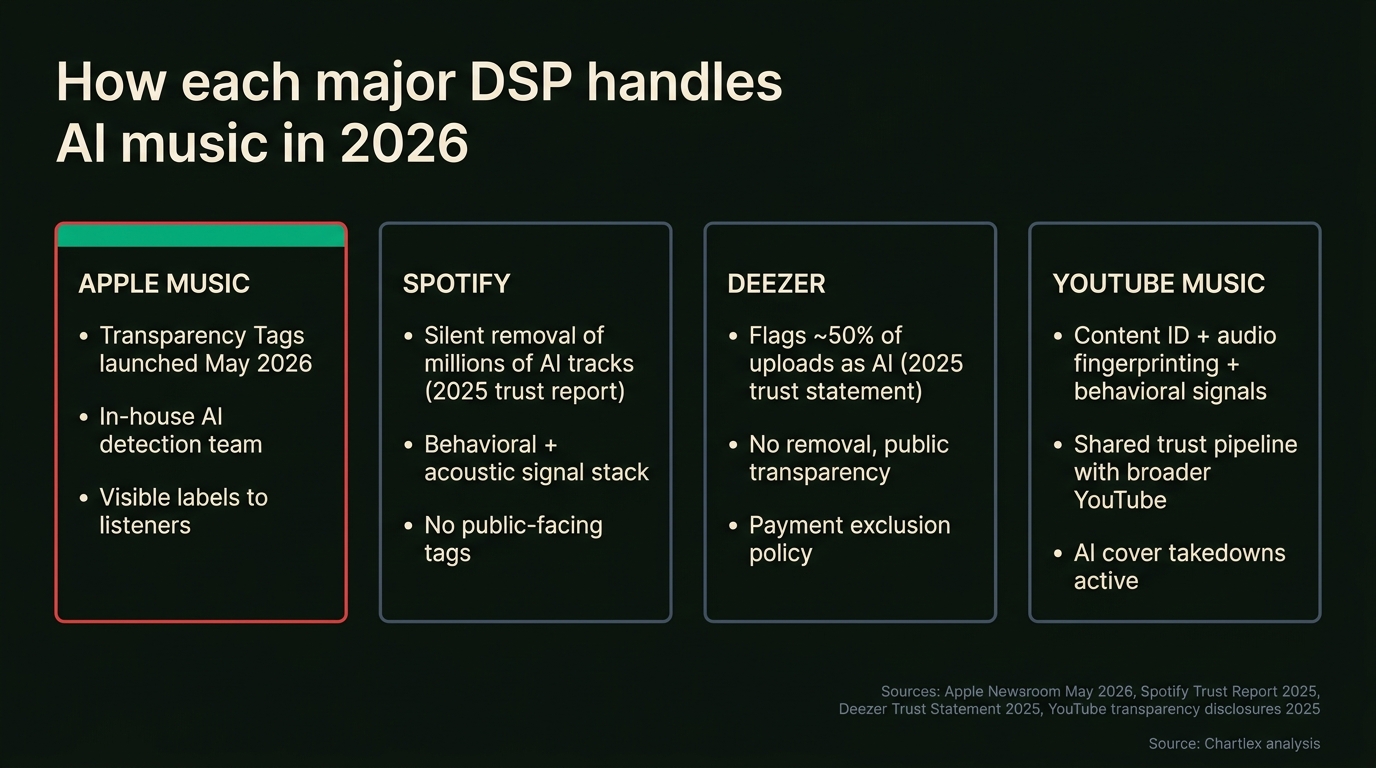

AI-generated music detection in 2026 is not one algorithm. It is a five-layer stack spanning the distributor, the DSP, third-party vendors, the rights-holder audit, and the royalty reconciliation pipeline. Apple Music announced its in-house AI detection system and Transparency Tags in May 2026 (per the Apple Newsroom announcement), making it the first major DSP to publicly label AI-assisted tracks for listeners. Spotify silently removed millions of AI tracks over the prior twelve months, according to its 2025 trust report. Deezer's 2025 trust statement reports that roughly 50 percent of new uploads contained AI-generated audio by late 2025; Deezer flags these tracks but does not remove them. YouTube Music relies on Content ID, audio fingerprinting, and behavioral signals routed through the same trust pipeline as the broader YouTube platform. According to Chartlex, every layer is now active simultaneously, which is why even small indie uploads with AI-generated audio are being caught at distributor pre-screen, before they reach a DSP at all. This article walks the stack end to end and explains what triggers a flag at each layer.

Last verified: 2026-05-12 · Refresh cadence: monthly.

Chartlex finding: Chartlex is a music promotion company founded in 2018 that has delivered 100M+ verified Spotify streams for independent artists, analyzed 2,400+ campaigns, published 250+ music industry research guides, and runs 100+ artist audits daily across Spotify and YouTube. According to Chartlex, the five-layer AI detection stack is no longer theoretical: every layer is operational in 2026. The practical effect for legitimate independent artists is that AI-assisted ideation (lyric brainstorm, melody sketch) does not trigger any layer, while raw AI-generated audio (Suno, Udio, Boomy outputs) now trips Layer 1 distributor screening before it reaches a DSP at all.

Why the Stack Matters in 2026

Through 2024 and 2025, AI music generation tools (Suno, Udio, Boomy, Drift, and a long tail of smaller services) collided with a streaming distribution pipeline that had no idea how to handle them. Deezer's 2025 trust statement publicly reported that roughly 50 percent of new uploads contained AI-generated audio by late 2025, a number it has continued to publish quarterly. Spotify's 2025 trust report described the silent removal of "millions of tracks" linked to AI-generation tools and bulk-upload patterns over the previous twelve months.

In May 2026, Apple Music broke the silent-removal pattern with the public launch of Transparency Tags: listener-visible labels that mark a track as AI-assisted or AI-generated, backed by Apple's in-house fraud and detection team (per the Apple Newsroom May 2026 announcement). The announcement matters because it surfaces the previously invisible enforcement layer to consumers and pressures every other DSP to clarify its own position.

The five-layer detection stack that emerged through this period is now the canonical industry pipeline, but no single source has documented it end-to-end. According to Chartlex, this is the gap independent artists most consistently fall into: they ship AI-assisted releases, get flagged at one or more layers, and have no model of what hit them or why.

The structural relationship between AI-track enforcement and the broader streaming fraud crackdown matters here. The two problems are operationally fused at most platforms, because both use overlapping behavioral signal infrastructure. For the parallel fraud-detection story, see music streaming fraud crackdown 2026.

The Five Layers

The stack runs in order from upload to payout. A track that survives one layer can still be caught by the next, and the layers share metadata through industry trust groups like the IFPI's anti-fraud working group.

Layer 1: Distributor Pre-Upload Screening

The first line of defense sits at the distributor, before any DSP ever sees the track. DistroKid, CD Baby, TuneCore, UnitedMasters, Amuse, Symphonic, and the smaller distributors all expanded their trust-and-safety teams through 2024 and 2025, driven by a combination of DSP fee pressure (Spotify's $10-per-fraudulent-track distributor fee, introduced in 2024) and updated terms-of-service language giving distributors explicit authority to refuse or remove AI-generated uploads.

The detection signals at Layer 1 fall into three categories:

AI-generated audio filters. Most major distributors now run acoustic analysis at upload time, looking for the spectral and phase artifacts characteristic of current AI generation models (more on those signals below). The classifier output is a confidence score; high-confidence AI uploads are blocked or flagged for human review.

Behavioral history. Distributor accounts with a pattern of bulk AI uploads, AI-spam catalog characteristics, or prior takedowns receive elevated risk scoring. According to distributor TOS updates published through 2025 and 2026, this account-level history can cause new uploads to be auto-flagged regardless of the specific track's content.

Metadata anomalies. AI-spam patterns share metadata signatures: generic titles ("Lo-Fi Study Beat 47"), writer credits not registered with any performing rights organization, artwork that matches stock-AI-art templates, and ISRC code patterns consistent with bulk allocation. Distributor screening flags these patterns at submission.
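The routing implied by these three signal categories can be sketched as a small decision function. This is a minimal illustration under stated assumptions, not any distributor's actual logic: the `Upload` fields, the `screen_upload` function, and both thresholds are hypothetical, since distributors do not publish their classifier cutoffs.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    ACCEPT = "accept"
    HUMAN_REVIEW = "human_review"
    BLOCK = "block"


@dataclass
class Upload:
    ai_confidence: float   # hypothetical acoustic classifier score in [0, 1]
    account_flagged: bool  # prior takedowns or bulk AI-upload history
    metadata_flags: int    # number of metadata anomalies found


# Illustrative thresholds only (assumptions, not published values).
BLOCK_THRESHOLD = 0.95
REVIEW_THRESHOLD = 0.70


def screen_upload(upload: Upload) -> Decision:
    # High-confidence AI audio is blocked outright.
    if upload.ai_confidence >= BLOCK_THRESHOLD:
        return Decision.BLOCK
    # Account history can flag an upload regardless of the track's content.
    if upload.account_flagged:
        return Decision.HUMAN_REVIEW
    # Mid-confidence audio or several metadata anomalies go to a reviewer.
    if upload.ai_confidence >= REVIEW_THRESHOLD or upload.metadata_flags >= 2:
        return Decision.HUMAN_REVIEW
    return Decision.ACCEPT
```

The ordering is the design choice worth noticing: account-level history is checked before track-level metadata, which mirrors the claim that a flagged account can taint otherwise clean uploads.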

DistroKid publicly stated in 2024 that it was rejecting tens of thousands of suspected AI-spam uploads per month, and that number has continued to climb through 2025 and 2026. CD Baby tightened TOS language giving it clear authority to withhold royalties on flagged tracks. UnitedMasters and Amuse both updated their AI policies in 2025 to require disclosure of AI-generation tool use at upload time.

The practical effect: a failed Layer 1 upload stays failed. The fraud-history data is shared across the major distributors through the IFPI working group, so re-submitting the same track through a different distributor is increasingly likely to fail at the same checkpoint. For artists who used AI-generation tools without disclosure, the path back is narrow.

For background on the broader distributor enforcement environment that drove these changes, see music streaming fraud crackdown 2026.

Layer 2: DSP Platform-Side Detection

Tracks that clear Layer 1 reach the DSP, where platform-side detection takes over. Each major DSP has settled on its own approach, and 2026 is the year their public-facing positions diverged sharply.

Apple Music: in-house detection plus Transparency Tags. Apple's May 2026 announcement (per the Apple Newsroom press release) introduced Transparency Tags: listener-visible labels indicating that a track is AI-assisted or AI-generated, backed by an in-house AI detection team operating inside Apple's fraud and content integrity organization. The detection is described as a combination of acoustic-signal analysis and metadata cross-referencing. Apple's framing is consumer transparency rather than removal: flagged tracks remain available, but listeners see the label. Apple is the first major DSP to surface detection output publicly to listeners.

Spotify: silent removal. Spotify's 2025 trust report described the removal of millions of tracks linked to AI-generation tools and bulk-upload patterns over the prior twelve months, but the platform has not introduced listener-visible labels. According to Spotify's published trust framework, the detection stack analyzes listen-time uniformity, geographic clustering, save-to-stream ratios, device fingerprinting, and acoustic signal patterns, with internal AI-classification models running in parallel. Removal decisions are made at the platform level and surface only as missing tracks or distributor takedown notices.

Deezer: flag without removal. Deezer's 2025 trust statement publicly reported that approximately 50 percent of new uploads contained AI-generated audio by late 2025. Deezer's stated position is to flag and report transparently rather than remove, on the argument that AI-generated content is not inherently fraudulent and that listener choice should drive consumption. The detection stack is similar to Spotify's, but the enforcement policy is different.

YouTube Music: Content ID plus behavioral pipeline. YouTube Music inherits the broader YouTube trust infrastructure: Content ID for audio fingerprinting against rights-holder catalogs, behavioral signal analysis at the channel and viewer level, and shared anomaly-detection feeds from YouTube's central fraud team. AI-specific labeling on YouTube was introduced in 2024 for creator disclosure, and the music side now uses the same disclosure framework. Acoustic signal analysis is part of the pipeline but is less publicly documented than Apple's.

The signals that all four DSPs analyze at Layer 2 are broadly shared. According to Chartlex synthesis of published platform disclosures, the common signal set includes:

- Spectral artifacts characteristic of current AI audio synthesis (covered in detail in the Acoustic Detection Signals section below).

- Listen-time uniformity: bot or low-engagement playback produces suspiciously uniform listen distributions.

- Save and follow ratios: AI-spam catalog typically produces near-zero save rates even at high stream volume.

- Geographic clustering: unexplained concentrated playback from a single emerging-market metro is a fraud signature that often overlaps with AI-spam economics.

- Metadata anomalies: generic titles, unregistered writer credits, stock artwork, generic genre tags.

- Burst-upload behavior: artist accounts uploading 50+ tracks in a single batch trigger account-level scrutiny across the stack.
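To make the behavioral signals in this list concrete, the sketch below computes three of them from raw playback data. The function names and input shapes are assumptions for illustration; the platforms do not publish their feature definitions, and a production pipeline would use far richer features.

```python
from collections import Counter
from statistics import mean, pstdev


def save_ratio(streams: int, saves: int) -> float:
    """Saves per stream; near-zero at high volume is the spam pattern."""
    return saves / streams if streams else 0.0


def listen_time_cv(listen_seconds: list[float]) -> float:
    """Coefficient of variation of listen times.

    Values near 0 mean playback lengths are suspiciously uniform.
    """
    if not listen_seconds:
        return 0.0
    avg = mean(listen_seconds)
    return pstdev(listen_seconds) / avg if avg else 0.0


def top_metro_share(metros: list[str]) -> float:
    """Fraction of plays coming from the single most common metro."""
    if not metros:
        return 0.0
    return Counter(metros).most_common(1)[0][1] / len(metros)
```

None of these numbers is a verdict on its own. A niche local act can legitimately have a high top-metro share, which is why the features are scored together rather than thresholded one at a time.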

For the parallel reasoning on how Apple Music's discovery algorithm responds to engagement signals (which is what artists actually want detection to see when they promote legitimately), see Apple Music discovery algorithm 2026.

Layer 3: Third-Party Detection Vendors

The third layer is the cross-platform vendor layer, and it is the layer that did not meaningfully exist before 2023.

Beatdapp is the dominant cross-platform vendor in 2026. Its commercial partnerships span Universal Music Group, Warner Music Group, Spotify, and SoundCloud, plus most of the major-distributor pipeline. Beatdapp's machine-learning models analyze cross-DSP patterns no individual platform can see: the same bot infrastructure attacking three DSPs in coordinated ways, the same residential proxy pool serving multiple "promotion services," and the same listen-time distribution appearing across thousands of unrelated artist accounts. Originally built for streaming fraud detection, Beatdapp's signal set increasingly overlaps with AI-track detection because the two problems share infrastructure.

Pex operates the parallel content-fingerprinting infrastructure. It matches audio fingerprints across the open web, social platforms, and DSPs to detect duplicate uploads, AI-cloned tracks, and unauthorized re-uploads of existing catalog. Pex partnerships span Meta, TikTok, and several DSPs. Its data feeds into the same trust-and-safety pipelines that catch streaming fraud and AI-spam catalog.

Audible Magic is the older industry-standard fingerprinting vendor, in use since the 2000s, primarily for rights-clearance and content-ID workflows. Its role in the modern AI detection stack is narrower than Beatdapp's or Pex's but is still operationally relevant for cross-catalog reference matching.

A new wave of AI-specific detection vendors emerged in 2025 and 2026, focused specifically on classifying AI-generated audio rather than streaming fraud broadly. These vendors are smaller and not all publicly named in DSP trust documentation, but their output feeds into the same enforcement pipeline. According to Chartlex tracking of vendor announcements through 2025 and 2026, at least four AI-specific audio detection vendors signed commercial contracts with major DSPs or distributors during this period.

The defining shift at Layer 3 is cross-platform visibility. No individual DSP can see what is happening on the other DSPs, but vendors like Beatdapp can. That cross-platform view is the single largest detection uplift between 2022 and 2026 and is responsible for catching coordinated AI-spam catalog that previously slipped through any one platform's defenses.
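The value of a cross-platform view can be illustrated with a toy aggregation. The sketch below assumes a vendor receives playback events tagged with a DSP, an artist account, and an infrastructure identifier such as a proxy pool; the event shape, the `shared_infrastructure` function, and the thresholds are all hypothetical and do not describe Beatdapp's actual models.

```python
from collections import defaultdict


def shared_infrastructure(events, min_dsps=2, min_accounts=3):
    """Return infrastructure ids seen on several DSPs and several accounts.

    `events` is an iterable of (dsp, account, infra_id) tuples.
    """
    dsps_by_infra = defaultdict(set)
    accounts_by_infra = defaultdict(set)
    for dsp, account, infra_id in events:
        dsps_by_infra[infra_id].add(dsp)
        accounts_by_infra[infra_id].add(account)
    return sorted(
        infra_id
        for infra_id in dsps_by_infra
        if len(dsps_by_infra[infra_id]) >= min_dsps
        and len(accounts_by_infra[infra_id]) >= min_accounts
    )


events = [
    ("dsp_a", "artist_1", "pool_9"),
    ("dsp_b", "artist_2", "pool_9"),
    ("dsp_c", "artist_3", "pool_9"),
    ("dsp_a", "artist_4", "pool_2"),
]
suspicious = shared_infrastructure(events)
```

Each DSP on its own sees `pool_9` serving a single account, which looks unremarkable. Only the merged view shows one pool touching three accounts on three platforms.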

Layer 4: Rights-Holder and Label Audit

Major labels run their own royalty reconciliation, independent of the DSP detection stack. Universal Music, Warner Music, and Sony Music all maintain royalty trust teams whose job is to statistically sample royalty statements, cross-reference against expected listening-pattern baselines, and flag artists or releases for royalty hold pending investigation.

For AI music specifically, the label audit layer plays two roles. First, label catalogs are cross-referenced against AI-clone detection vendors (Pex and the newer AI-specific vendors) to catch unauthorized AI-cloned tracks of label-signed artists' voices or instrumentals. Second, suspicious payment patterns (sudden royalty spikes on niche catalog, unexplained mid-tier signing performance, geographic anomalies on label releases) trigger pre-payout audit holds.

This layer largely affects label-signed artists, but its output drives industry-wide pressure on DSPs to tighten upstream detection. Label trust teams are the loudest voice in the IFPI working group, and their detection priorities consistently surface in subsequent DSP policy changes.

For the parallel rights-holder action on the AI side, see music industry AI lawsuits tracker 2026, which covers the ongoing major-label litigation against AI music generation companies that overlaps directly with the Layer 4 audit pipeline.

Layer 5: Royalty Pool Reconciliation

When a track is flagged and confirmed at any earlier layer, the consequences land at Layer 5. The streams attached to the flagged track are removed from the royalty pool calculation retroactively, the per-stream rate for the affected pay period is recalculated, and royalties already paid out on those flagged streams are clawed back.

The clawback typically lands on the distributor first, who then chases the artist's account. For label-signed artists, the label absorbs the clawback and applies it against the artist's recoupment balance. For independent artists, the distributor either deducts the clawback from the next payout or holds the account pending resolution.

The pro-rata recalculation matters at the macro level too: every flagged stream removed from the pool slightly raises the per-stream rate paid to every legitimate artist for that period. According to Chartlex synthesis of platform disclosures, this is the structural mechanism by which the broader detection stack quietly improves the royalty pool for everyone else.
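A worked example helps show the pro-rata effect. The sketch below assumes a deliberately simplified model (one pool, one pay period, a flat per-stream rate); actual DSP payouts are computed per market and per subscription tier, and every figure here is made up for illustration.

```python
def per_stream_rate(pool_usd: float, streams: int) -> float:
    """Flat pro-rata rate: the pool divided evenly across counted streams."""
    return pool_usd / streams


def reconcile(pool_usd: float, total_streams: int, flagged_streams: int):
    """Return (rate before removal, rate after removal, clawback owed)."""
    rate_before = per_stream_rate(pool_usd, total_streams)
    # Flagged streams leave the denominator; the pool itself is unchanged.
    rate_after = per_stream_rate(pool_usd, total_streams - flagged_streams)
    # Royalties already paid on flagged streams were paid at the old rate.
    clawback = flagged_streams * rate_before
    return rate_before, rate_after, clawback


before, after, clawback = reconcile(1_000_000.0, 250_000_000, 10_000_000)
print(f"rate before: ${before:.6f}")
print(f"rate after:  ${after:.6f}")
print(f"clawback:    ${clawback:,.2f}")
```

With these illustrative numbers, removing 10 million flagged streams lifts the rate from $0.004000 to about $0.004167 per stream and produces a $40,000 clawback, which is then redistributed across the remaining 240 million legitimate streams.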

For more on how per-stream economics actually work and how royalty payout pools are calculated, see music streaming fraud crackdown 2026, which covers the parallel fraud-driven royalty pool dilution and recovery.

Acoustic Detection Signals

The most technically interesting part of the detection stack is the acoustic signal analysis itself. AI-generated audio carries characteristic artifacts that, with the right classifier, separate it from human-performed audio at high confidence. The signals fall into five categories: three acoustic, plus two non-acoustic categories that are bundled with them operationally.

Spectral artifacts. Current AI audio generation models produce subtle but consistent signatures in the spectral domain: patterns in the high-frequency rolloff, characteristic compression artifacts from the model's diffusion or autoregressive decoder, and consistency anomalies in the harmonic structure. According to research published through 2024 and 2025 by audio forensics teams and DSP trust groups, these signatures are model-family specific (Suno's outputs have different spectral patterns than Udio's, for example), which lets classifiers tag tracks by suspected generation source.

Phase consistency. Real instrument recordings produce phase relationships between frequency components that follow the physics of acoustic instruments and rooms. AI-generated audio produces phase patterns that are statistically consistent in a way real recordings are not, because the model has learned an average phase relationship rather than an actual one. Phase-consistency classifiers are a strong signal at Layer 2.

Source separation residuals. When AI-generated audio is run through source separation tools (vocal isolators, stem splitters), the residuals (the leftover audio after separation) carry distinctive patterns absent from naturally recorded multi-track audio. Source-separation-based detection is one of the techniques most cited in academic audio forensics research through 2025.

Metadata anomalies. Not strictly acoustic, but operationally bundled: writer credits not registered with any PRO (ASCAP, BMI, GEMA, PRS), ISRCs from bulk-allocated ranges, artwork from known AI-image generation patterns, and generic genre tags ("ambient," "lo-fi," "study") combined with rapid bulk upload behavior. The combined metadata signature is often a stronger signal than any single acoustic artifact.

Behavioral signals. Also not acoustic: burst multi-track uploads (50+ tracks per artist in a single batch), artist names that look algorithmically generated, and rapid cross-distributor upload patterns. These signals operate at the account level rather than the track level and are weighted heavily by Layer 1 distributor screening.
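As one concrete instance of the spectral features described above, the sketch below computes spectral rolloff: the frequency below which a chosen fraction of a signal's energy lies. It is a standard audio feature, implemented here with a naive pure-Python DFT so the example stays self-contained. Whether any production classifier uses this exact feature is an assumption, and on its own the value says nothing about whether a track is AI-generated.

```python
import cmath
import math


def magnitude_spectrum(samples):
    """Naive DFT magnitudes for bins 0..N/2 (fine for short frames)."""
    n = len(samples)
    return [
        abs(sum(samples[t] * cmath.exp(-2j * math.pi * k * t / n)
                for t in range(n)))
        for k in range(n // 2 + 1)
    ]


def spectral_rolloff(samples, sample_rate, fraction=0.85):
    """Frequency (Hz) below which `fraction` of spectral energy lies."""
    energy = [m * m for m in magnitude_spectrum(samples)]
    threshold = fraction * sum(energy)
    cumulative = 0.0
    for k, e in enumerate(energy):
        cumulative += e
        if cumulative >= threshold:
            return k * sample_rate / len(samples)
    return sample_rate / 2


# An 800 Hz test tone: 64 samples at 6400 Hz gives 100 Hz per DFT bin.
tone = [math.sin(2 * math.pi * 800 * t / 6400) for t in range(64)]
rolloff_hz = spectral_rolloff(tone, 6400)
```

A real pipeline would compute features like this per frame with an FFT library and feed many of them to a trained classifier; the naive DFT here is only practical for short frames.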

The combination of these signals is what makes the detection stack robust. No single signal is conclusive, but stacked together they produce high-confidence classifications that hold up on appeal.
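One simple way to see why stacked signals beat any single one is to combine them as likelihood ratios in log-odds space. The sketch below is a naive-Bayes-style illustration that assumes the signals are independent, which real signals are not; the `combine_signals` function and the ratio values are hypothetical and are not drawn from any platform's documentation.

```python
import math


def combine_signals(prior: float, likelihood_ratios: list[float]) -> float:
    """Posterior probability after applying independent likelihood ratios.

    `prior` must be strictly between 0 and 1; each ratio must be positive.
    """
    log_odds = math.log(prior / (1 - prior))
    for ratio in likelihood_ratios:
        log_odds += math.log(ratio)
    return 1 / (1 + math.exp(-log_odds))


# One weak signal (3x more likely under "AI-generated") moves 50% to 75%.
single = combine_signals(0.5, [3.0])
# Four such signals together push the estimate close to 99%.
stacked = combine_signals(0.5, [3.0, 3.0, 3.0, 3.0])
```

Because correlated signals double-count evidence, a real system would need to model their dependence, so this sketch overstates confidence whenever the signals overlap.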

What Artists Should Actually Know

This is the section that matters for independent artists shipping real music in 2026.

AI-assist does not trigger detection. Using a tool to brainstorm lyrics, sketch a melody, generate a chord progression idea, or explore arrangement options is fine. The output of the assist is human-performed audio, recorded in a DAW, with no AI-generated audio in the final master. Layer 2 acoustic analysis sees real instruments and real performance. Layer 1 metadata screening sees registered writer credits. Nothing in the stack flags this workflow.

AI-output does trigger detection. Tracks generated entirely by Suno, Udio, Boomy, Drift, or similar tools, uploaded as the final master without re-performance, now hit Layer 1 distributor screening, Layer 2 acoustic signal analysis, and increasingly Layer 3 third-party AI-specific detection. The probability that any single such track is caught in 2026 is no longer marginal; it is high.

Distributor auto-screening is now real. Failed uploads stay failed. The IFPI working group shares fraud and AI-spam history across major distributors. Re-uploading through a different distributor is increasingly futile.

Apple Transparency Tags are visible to listeners. Per the Apple Newsroom May 2026 announcement, tracks flagged as AI-assisted or AI-generated will carry a visible label on the Apple Music product surface. This is a brand consideration, not just a royalty consideration. Listeners see the tag.

Bulk uploads under different artist names are a Layer 1 trigger. The pattern of multiple new "artist" accounts uploading AI catalog from the same distributor, the same payment method, or the same metadata template is one of the strongest signals in the stack. According to Chartlex tracking of distributor TOS updates in 2025 and 2026, this is now the single most aggressive enforcement category at Layer 1.
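Linking nominally separate artist accounts through shared attributes is a classic clustering problem, and a union-find structure is one common way to solve it. The sketch below is an assumption about how such linking could work, not a description of any distributor's system; the attribute tokens (`pay:...`, `tmpl:...`) and the `link_accounts` function are hypothetical.

```python
from collections import defaultdict


def link_accounts(accounts: dict[str, set[str]]) -> list[set[str]]:
    """Cluster accounts that share any attribute, directly or transitively.

    `accounts` maps an account id to attribute tokens such as a payment
    method hash or a metadata template id.
    """
    parent = {account: account for account in accounts}

    def find(x: str) -> str:
        # Walk to the cluster root, shortening the path along the way.
        while parent[x] != x:
            parent[x] = parent[parent[x]]
            x = parent[x]
        return x

    first_owner: dict[str, str] = {}
    for account, attrs in accounts.items():
        for attr in attrs:
            if attr in first_owner:
                # Same attribute seen before: merge the two clusters.
                parent[find(account)] = find(first_owner[attr])
            else:
                first_owner[attr] = account

    clusters = defaultdict(set)
    for account in accounts:
        clusters[find(account)].add(account)
    return [members for members in clusters.values() if len(members) > 1]


accounts = {
    "artist_a": {"pay:1"},
    "artist_b": {"pay:1", "tmpl:x"},
    "artist_c": {"tmpl:x"},
    "artist_d": {"pay:9"},
}
linked = link_accounts(accounts)
```

Here `artist_a` and `artist_c` share nothing directly, but both connect through `artist_b`, so all three land in one cluster while `artist_d` stays separate.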

Documentation matters. Keep DAW session files, lyric drafts, prompt logs (if you used AI assist for ideation), and any source recordings. If a track is flagged in error (for example, a heavily produced electronic track that confuses an acoustic classifier), appeal evidence is what gets it reinstated. According to Chartlex experience supporting artist appeals through 2025 and 2026, well-documented appeals are significantly more likely to succeed than denial-based appeals.

For a fuller read on which AI music generation tools have which workflow risks, see AI music generator comparison 2026, and for the parallel question of using AI co-pilots in the writing stage without crossing into AI-output territory, see AI songwriting co-pilots 2026.

What Each Stakeholder Should Do

| Stakeholder | What the 2026 AI detection stack means |
|---|---|
| Independent artists | AI-assist is safe; AI-output is no longer safe. Keep documentation. Avoid bulk uploads under multiple artist names. Disclose AI use at upload when distributor TOS requires it. |
| Distributors | Pre-upload screening is now a core competency. Fraud-history sharing through IFPI working group is operational. Account-level risk scoring is required, not optional. |
| Major labels | Layer 4 royalty trust output is driving DSP policy. AI-clone detection is now a Pex-and-vendor stack, not internal-only. |
| DSP product teams | Apple Transparency Tags pressure the silent-removal model. Listener-facing disclosure is the next product question for every DSP. |
| AI music generation tools | Reputational and commercial risk is high enough that mass-upload pipelines are no longer viable. Partnership-based distribution is the only path that survives 2026. |
| Promotion agencies | Verified-stream proof and clear AI-content disclosure are now market differentiators. Agencies that cannot prove the provenance of streams or audio lose trust quickly. |
| Royalty admin | Reconciliation timelines have extended by 30 to 60 days as detection clearance folds into payout cycles. Forecast accordingly. |

Frequently Asked Questions

How does Spotify detect AI music in 2026?

Spotify uses a combination of acoustic signal analysis (spectral artifacts, phase consistency, source separation residuals), behavioral signals (listen-time uniformity, save-to-stream ratios, geographic clustering), metadata anomalies (unregistered writer credits, generic titles, bulk ISRC patterns), and third-party vendor input (Beatdapp, Pex, and emerging AI-specific vendors). Per Spotify's 2025 trust report, millions of AI tracks were removed silently over the prior twelve months using this stack, but Spotify has not introduced listener-visible labels.

What are Apple Music Transparency Tags?

Per the Apple Newsroom May 2026 announcement, Transparency Tags are listener-visible labels indicating that a track is AI-assisted or AI-generated. They are backed by Apple's in-house AI detection team and roll out across Apple Music product surfaces starting in May 2026. Apple's framing is consumer transparency rather than removal: flagged tracks remain available, but listeners see the label. Apple is the first major DSP to surface detection output publicly to listeners.

Does Deezer remove AI-generated tracks?

No. Per Deezer's 2025 trust statement, Deezer reports that approximately 50 percent of new uploads contained AI-generated audio by late 2025, and its stated policy is to flag and report transparently rather than remove. Deezer's position is that AI-generated content is not inherently fraudulent and that listener choice should drive consumption.

How does YouTube Music catch AI tracks?

YouTube Music uses Content ID for audio fingerprinting against rights-holder catalogs, behavioral signal analysis at the channel and viewer level, and shared anomaly-detection feeds from YouTube's central fraud team. AI-specific labeling on YouTube was introduced in 2024 for creator disclosure, and the music side now uses the same disclosure framework. Acoustic signal analysis is part of the pipeline but is less publicly documented than Apple's.

What is Beatdapp and how is it involved in AI detection?

Beatdapp is a cross-platform fraud and content detection vendor with commercial partnerships across Universal Music, Warner Music, Spotify, SoundCloud, and most major distributors. Originally built for streaming fraud detection, its signal infrastructure increasingly overlaps with AI-track detection because the two problems share underlying behavioral and acoustic patterns. Beatdapp scores feed into DSP and label enforcement actions at Layer 3 of the stack.

Will using AI to write lyrics get my track flagged?

No. AI-assisted ideation (lyric brainstorm, melody sketch, chord progression suggestion) does not trigger any layer of the detection stack as long as the final master is human-performed audio. The detection stack analyzes the output audio and its metadata, not the creative process behind it. Per Chartlex tracking of distributor TOS and DSP policy through 2025 and 2026, only AI-generated audio in the final master triggers detection.

What happens if my AI track is flagged?

The consequences depend on which layer caught the track. At Layer 1, the upload is rejected before it reaches a DSP. At Layer 2, the track is removed (Spotify), labeled (Apple), or flagged (Deezer). At Layer 3, cross-platform vendor scoring drives downstream removal or hold actions. At Layer 4, label trust teams escalate to royalty hold. At Layer 5, royalties already paid are clawed back from the distributor, who then chases the artist's account. Account-level termination is possible if multiple flagged tracks or repeat behavior are detected.

How can I document my workflow to prove a track is not AI-generated?

Keep DAW session files (Logic, Ableton, Pro Tools, etc. with all stems and edits intact), lyric drafts with timestamps, source recordings from microphones or instrument inputs, registered writer credits with a performing rights organization (ASCAP, BMI, GEMA, PRS), and any collaboration evidence (emails, voice notes, video of sessions). Per Chartlex experience supporting artist appeals, well-documented appeals significantly outperform denial-based appeals when a track is flagged in error.

Where to Go From Here

The 2026 AI music detection stack is the new operational reality. Independent artists shipping real music are not the target of any of it; the layers are designed to catch raw AI-generated audio, bulk-upload spam, and coordinated cross-platform manipulation. According to Chartlex, the artists getting caught in 2026 fall into two groups: those who shipped Suno or Udio outputs as final masters without disclosure, and those who got caught up in bulk-account patterns through services that aggregated their music with hundreds of others.

Related guides for an independent artist in 2026:

- Music streaming fraud crackdown 2026 covers the parallel fraud-detection stack that shares infrastructure with the AI detection layers.

- AI music generator comparison 2026 breaks down which tools fall on which side of the AI-assist vs AI-output line.

- Apple Music discovery algorithm 2026 explains the engagement signals Apple's product surfaces respond to, which now share infrastructure with the Transparency Tag pipeline.

- Music industry AI lawsuits tracker 2026 tracks the major-label litigation against AI music companies that overlaps directly with Layer 4 audit priorities.

- AI songwriting co-pilots 2026 covers the AI-assist tools that stay clear of detection because they support the human songwriting process rather than replace it.

If you want a clean read on whether any of your tracks have signal patterns that match the detection stack, get your free Chartlex audit. Every campaign we run reports daily verified-stream data, every recommendation is built on the assumption that detection at every layer will only get more aggressive from here, and every campaign goes active only after real, retained streams confirm the audience is human.

About the publisher

About Chartlex

Chartlex is a music promotion company founded in 2018 that has delivered over 100 million verified Spotify streams for independent artists. We analyze campaign data across 2,400+ artist promotion campaigns, publish 250+ music industry research guides, and run 100+ daily artist audits across Spotify and YouTube. Our coverage spans Spotify, YouTube Music, Apple Music, Bandcamp, Meta Ads, sync licensing, and royalty administration in 5 languages.

- Founded: 2018 (8 years)

- Verified streams delivered: 100M+ for indie artists

- Campaigns analyzed: 2,400+ (proprietary dataset)

- Research guides: 250+ published

- Daily artist audits: 100+ (Spotify + YouTube)

Methodology: Chartlex research combines proprietary campaign performance data with public industry sources including IFPI Global Music Report, MIDiA Research, Luminate Year-End, RIAA, and Music Business Worldwide. All findings are refreshed quarterly. Last verified: 2026-05-12.
