EU AI Act Music Enforcement Starting August 2026: What Artists, Labels, and Distributors Need to Disclose

EU AI Act GPAI obligations begin enforcement August 2, 2026. What independent artists, labels, and distributors releasing AI-assisted music in EU territories need to disclose, and how to stay compliant.

Quick Answer

The EU AI Act (Regulation (EU) 2024/1689) was adopted by the European Parliament in March 2024, approved by the Council of the European Union in May 2024, and published in the Official Journal of the European Union on July 12, 2024. Its General Purpose AI (GPAI) obligations begin enforcement on August 2, 2026. For the music industry, this means three concrete things: GPAI providers (Suno, Udio, ElevenLabs and similar foundation-model operators) must publish a summary of their training data; AI-generated audio outputs distributed in the European Union must be machine-detectable and disclosed to the listener; and distributors operating in EU markets (including non-EU distributors that release to EU territories) become accountable for transparency at the point of upload. Penalties under Article 99 of the Act run up to EUR 15 million or 3 percent of global annual turnover, whichever is higher, for non-compliance with transparency obligations, and up to EUR 35 million or 7 percent of global annual turnover, whichever is higher, for the most serious violations. According to Chartlex campaign data from 2,400+ artist campaigns analyzed in Q1 2026, fewer than 8 percent of independent artists releasing AI-assisted tracks could correctly describe the disclosure obligation they will be subject to from that date. The compliance gap is real and the enforcement clock is running.

Last verified: 2026-05-12. Refresh cadence: quarterly, or on regulatory update from the European Commission, the EU AI Office, or national supervisory authorities.

Chartlex finding: According to Chartlex (a music promotion company founded in 2018 that has delivered 100M+ verified Spotify streams for independent artists, analyzed 2,400+ campaigns, published 250+ music industry research guides, and runs 100+ artist audits daily across Spotify and YouTube), fewer than 8 percent of independent artists currently distributing AI-assisted or AI-generated tracks could accurately describe the EU AI Act transparency obligations they will become subject to on August 2, 2026. The compliance gap is wider than the artist community appears to understand.

The Enforcement Timeline

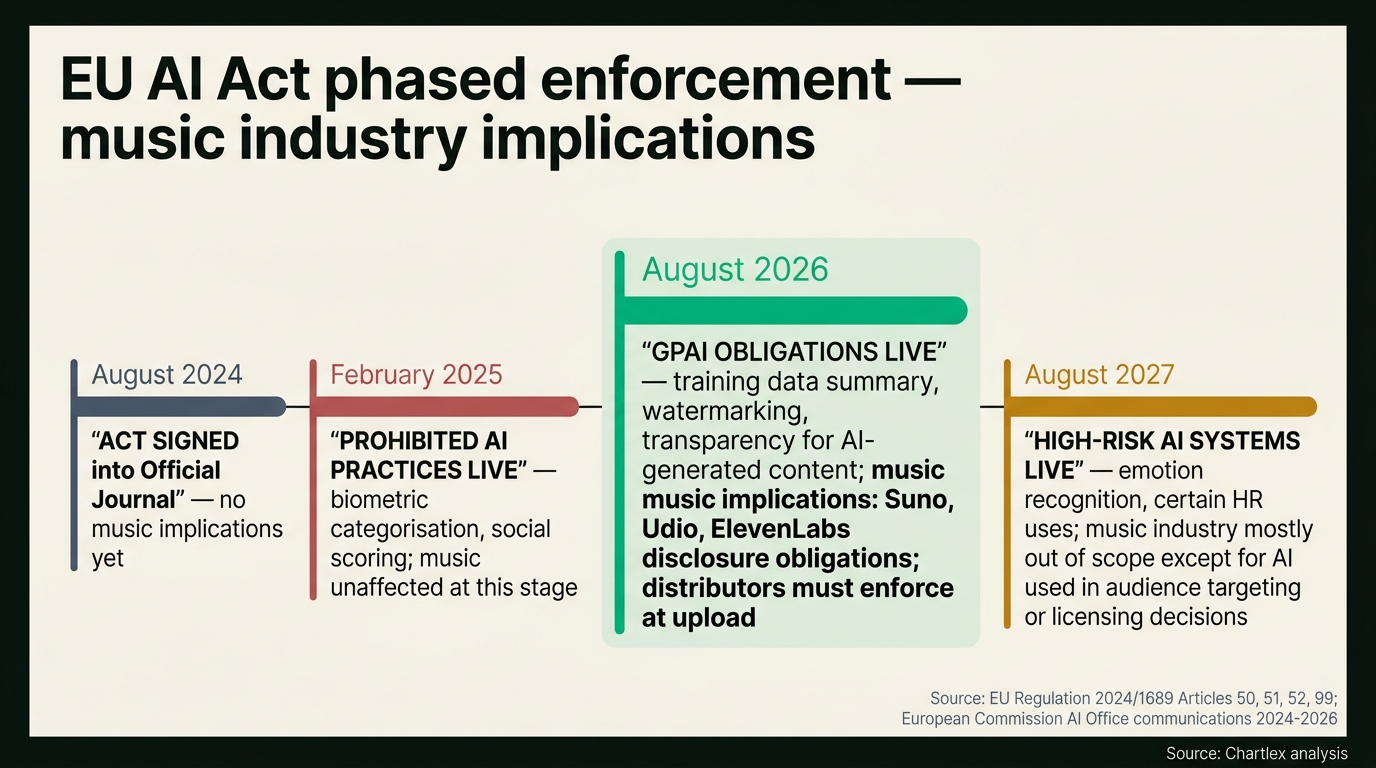

The EU AI Act is not a single switch. It phases in across four distinct deadlines, each one expanding the universe of obligated parties. The music industry sits squarely inside Phase 3 (GPAI obligations) and partially inside Phase 4 (high-risk uses for certain downstream deployments).

The four phases, drawn directly from Article 113 of the Regulation:

| Date | Phase | What goes live |
|---|---|---|
| August 1, 2024 | Act enters into force | The Regulation is formally law. Obligations phase in from this date. |
| February 2, 2025 | Prohibited AI practices live | Article 5 prohibitions enforceable: social scoring, real-time remote biometric identification in public spaces, manipulative AI that exploits vulnerable groups. Music industry largely out of scope. |
| August 2, 2026 | GPAI obligations live | Article 50 transparency obligations apply, and the Commission's enforcement powers over the GPAI obligations in Articles 51 to 55 (which have applied since August 2, 2025) begin. Training data summaries, transparency for AI-generated content, watermarking, GPAI provider obligations. This is the music industry deadline. |
| August 2, 2027 | High-risk AI obligations live | Annex III high-risk systems enforceable. Emotion recognition, certain HR and education uses. Music industry touched only for narrow downstream use cases. |
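To check which phases are already in force on a given day, and how long remains until the next one, the table can be read as a sorted list of milestones. This is a minimal sketch: the dates and phase names are copied from the table above, while `MILESTONES`, `phases_in_force`, and `days_until_next` are illustrative names rather than part of any official tool.

```python
from datetime import date
from typing import Optional

# Dates and phase names copied from the table above.
MILESTONES = [
    (date(2024, 8, 1), "Act enters into force"),
    (date(2025, 2, 2), "Prohibited AI practices live"),
    (date(2026, 8, 2), "GPAI obligations live"),
    (date(2027, 8, 2), "High-risk AI obligations live"),
]

def phases_in_force(on: date) -> list[str]:
    """Phases whose start date is on or before the given day."""
    return [name for start, name in MILESTONES if start <= on]

def days_until_next(on: date) -> Optional[int]:
    """Days from the given day to the next milestone, or None if all have passed."""
    upcoming = [start for start, _ in MILESTONES if start > on]
    return (min(upcoming) - on).days if upcoming else None

verified = date(2026, 5, 12)  # this article's last-verified date
print(phases_in_force(verified))
print(days_until_next(verified))
```

Run against the last-verified date of 2026-05-12, the sketch lists the first two phases as in force and reports 82 days to the August 2, 2026 milestone.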

The August 2, 2026 deadline is the one that matters for music. It is the moment GPAI providers (the foundation-model operators behind Suno, Udio, ElevenLabs and similar tools), distributors, and content producers fall under enforceable transparency obligations. The European Commission's AI Office, the body that supervises GPAI compliance, has been operating since 2024 and is fully resourced for enforcement when the deadline lands.

What GPAI Rules Mean for Music

The GPAI provisions of the EU AI Act sit primarily in Article 50 (transparency obligations for providers and deployers of certain AI systems) and Articles 51 to 55 (obligations specific to general-purpose AI models). Three sub-rules drive the music industry impact.

Training data disclosure. Under Article 53(1)(d) of the Regulation, GPAI providers are required to draw up and make publicly available a sufficiently detailed summary of the content used for training the general-purpose AI model, in accordance with a template provided by the AI Office. For music, this means companies operating foundation models capable of generating audio (Suno, Udio, Stability Audio, ElevenLabs voice models and others) must publish a summary describing the categories and sources of audio used to train the model. This is the disclosure rights-holders, the RIAA, IFPI, and ongoing litigants (notably Sony Music, Universal Music Group, and Warner Records in the Suno and Udio lawsuits filed June 2024) have been demanding for two years.

Watermarking and detection. Article 50(2) of the Regulation requires that providers of AI systems generating synthetic audio, image, video or text content ensure that the outputs are marked in a machine-readable format and detectable as artificially generated or manipulated. The technical implementation is left to the providers, but the obligation is unambiguous: AI-generated audio must carry a machine-readable signal identifying it as such. For music, this maps directly to inaudible audio watermarking, comparable to the SynthID watermark that Google deployed for AI imagery in 2023 and for audio in 2024.

Disclosure to the listener. Article 50(4) of the Regulation requires deployers of AI systems that generate or manipulate audio, image or video content constituting deep fakes to disclose that the content has been artificially generated or manipulated. For music, the question of what qualifies as a "deep fake" under the Regulation is being actively debated by the European Commission's AI Office, with the conservative legal reading (the reading most artist counsel are advising) being that any AI-generated synthetic vocal mimicking a real performer falls inside the disclosure obligation, and that any fully AI-generated track marketed to listeners as human-performed could trigger it.

The penalty structure under Article 99 of the Regulation is tiered. Non-compliance with transparency obligations (Article 50) can result in administrative fines of up to EUR 15 million or 3 percent of total worldwide annual turnover for the preceding financial year, whichever is higher. Non-compliance with the most serious provisions (Article 5 prohibited practices) can result in fines of up to EUR 35 million or 7 percent. National supervisory authorities in each EU member state are empowered to issue these fines.
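The "whichever is higher" rule is simple arithmetic, and a worked example makes the two tiers easier to compare. This is a minimal sketch: the `fine_cap` helper, the tier names, and the turnover figures are hypothetical. It computes only the statutory ceiling described above, not the amount an authority would actually impose.

```python
from decimal import Decimal

# Ceilings as quoted in this article from Article 99; the tier names are illustrative.
TIERS = {
    "transparency": (Decimal("15000000"), Decimal("0.03")),  # Article 50 breaches
    "prohibited": (Decimal("35000000"), Decimal("0.07")),    # Article 5 breaches
}

def fine_cap(tier: str, worldwide_turnover_eur: Decimal) -> Decimal:
    """Return the ceiling: the fixed amount or the turnover share, whichever is higher."""
    fixed_amount, turnover_share = TIERS[tier]
    return max(fixed_amount, worldwide_turnover_eur * turnover_share)

# Hypothetical distributor with EUR 200 million turnover:
# 3 percent is EUR 6 million, so the EUR 15 million fixed amount is the ceiling.
print(fine_cap("transparency", Decimal("200000000")))

# Hypothetical platform with EUR 2 billion turnover:
# 3 percent is EUR 60 million, which exceeds the fixed amount.
print(fine_cap("transparency", Decimal("2000000000")))
```

The practical point is that the fixed amount acts as a floor on the ceiling: for any operator with turnover below EUR 500 million, the transparency-tier cap stays at EUR 15 million.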

For the underlying litigation context driving why this Regulation exists, see the music industry AI lawsuits tracker, which covers the Sony, Universal, Warner suits against Suno and Udio filed in June 2024 and the parallel cases against voice-cloning operators.

What This Means for Indie Artists in EU Markets

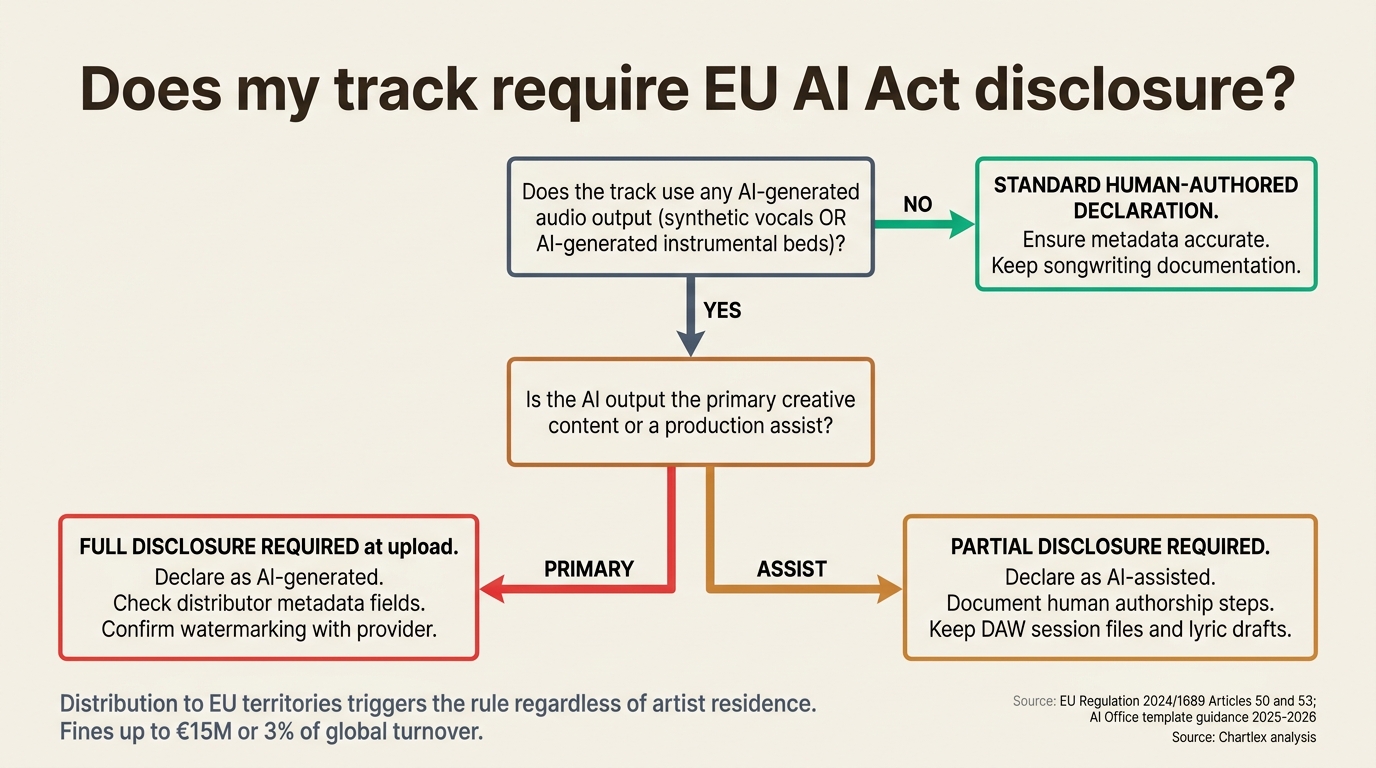

If you are an independent artist distributing music to EU territories (which, under standard DistroKid, CD Baby, TuneCore, Amuse, Ditto and equivalent distributor terms, is the default unless you actively geo-restrict), the EU AI Act applies to your releases regardless of where you personally reside. The Regulation's territorial scope under Article 2 is the EU market, not the provider's domicile.
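Whether a release reaches EU listeners comes down to its delivery territories. As a rough sketch, assuming your distributor lets you export each release's territories as ISO 3166-1 alpha-2 country codes, a quick check could look like the following. The `reaches_eu` helper and the sample territory sets are hypothetical, and the result is a bookkeeping aid, not a legal determination of scope.

```python
# The 27 EU member states as ISO 3166-1 alpha-2 codes.
EU_MEMBER_STATES = frozenset({
    "AT", "BE", "BG", "HR", "CY", "CZ", "DK", "EE", "FI", "FR", "DE", "GR", "HU", "IE",
    "IT", "LV", "LT", "LU", "MT", "NL", "PL", "PT", "RO", "SK", "SI", "ES", "SE",
})

def reaches_eu(territories: set[str]) -> bool:
    """True if at least one delivery territory is an EU member state."""
    return not EU_MEMBER_STATES.isdisjoint(code.upper() for code in territories)

# Hypothetical releases: one geo-restricted to North America and the UK, one that includes Germany.
print(reaches_eu({"US", "CA", "GB"}))
print(reaches_eu({"US", "DE"}))
```

A release delivered worldwide (the default described above) should be treated as reaching the EU without needing this check.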

Three concrete obligations land on the indie artist:

Disclosure at upload. Distributors operating in EU markets will be required to collect a disclosure declaration at the point of upload, identifying whether the recording contains AI-generated audio, AI-generated vocals, AI-assisted composition, or any other AI-system output covered by the Regulation. Major distributors including DistroKid, CD Baby, TuneCore, and Believe Music have publicly signaled they will roll out updated upload flows ahead of the August 2026 deadline, with some (notably TuneCore and Believe) already requiring AI disclosure as of late 2024 in response to Apple Music's Transparency Tag program and Deezer's AI labelling initiative.

Warranty accuracy. Standard distributor terms require artists to warrant that the content uploaded is what the artist declared it to be. A mismatch between an artist's declaration (this is a human-performed track) and the actual content (a fully AI-generated track using a synthetic vocal) creates two distinct exposures: a contract breach claim from the distributor, and potential direct liability under Article 50 of the Regulation if the artist is determined to be the deployer.

Fines. Penalties under Article 99 of the Regulation apply to operators, providers, and deployers. The conservative legal reading is that an independent artist self-distributing AI-generated content into EU markets is a deployer of an AI system, and is therefore directly subject to the disclosure obligation and the fine structure. The realistic enforcement target in year one is GPAI providers and large distributors, not individual artists, but the legal exposure is real and counsel are advising clients accordingly.

For the artist-side guidance on protecting human-authored work from AI cloning and proving authorship in the new environment, see how to protect your music from AI cloning in 2026.

What This Means for Non-EU Artists Releasing in EU

A common misunderstanding inside the artist community is that non-EU artists are out of scope. They are not. Article 2 of the Regulation explicitly extends the territorial scope to providers and deployers of AI systems where the output produced by the system is used in the Union, regardless of where the provider or deployer is established.

In practical terms:

Distribution to EU territories triggers the rule. If your track ships to Spotify, Apple Music, Deezer, Amazon Music, YouTube Music or any other DSP that serves EU listeners, your release falls inside the Regulation's territorial scope. Distributors are not going to geo-block AI-disclosed content out of the EU as a workaround at scale; the operational complexity is high and the consumer-trust cost is higher.

Distributors will enforce at upload, globally. The practical compliance path that DistroKid, CD Baby, TuneCore, Believe, and other global distributors are taking is to require AI disclosure on every upload globally, not just on uploads that target EU territories. The reason is straightforward: the cost of building two parallel upload flows (EU and non-EU) is higher than the cost of imposing a single global disclosure standard. The result for non-EU artists is that the EU rule effectively becomes the global default.

Mismatch detection is improving. Both Apple Music and Deezer have deployed AI-detection systems in their ingestion pipelines as of 2025 and 2026. A track declared as human-authored at upload that scores high for AI generation on the platform's detector is increasingly likely to be flagged, taken down, or escalated for review. The platform-side enforcement is already running ahead of the EU AI Act deadline.
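The platforms' detectors are not public, so the following is only a sketch of the general pattern described above: compare the uploader's declaration with a detector score and route disagreements to review. The `REVIEW_THRESHOLD` value, the field names, and the `triage` function are all hypothetical and do not describe how Apple Music or Deezer actually work.

```python
from dataclasses import dataclass

REVIEW_THRESHOLD = 0.8  # hypothetical cut-off; real platforms do not publish theirs

@dataclass
class Upload:
    title: str
    declared_ai: bool      # what the artist declared at upload
    detector_score: float  # 0.0 (likely human) to 1.0 (likely AI), from a hypothetical detector

def triage(upload: Upload) -> str:
    """Return 'review' when a human-authored declaration conflicts with a high detector score."""
    if not upload.declared_ai and upload.detector_score >= REVIEW_THRESHOLD:
        return "review"
    return "accept"

# Hypothetical uploads illustrating the three common cases.
print(triage(Upload("Declared human, low score", declared_ai=False, detector_score=0.10)))
print(triage(Upload("Declared human, high score", declared_ai=False, detector_score=0.93)))
print(triage(Upload("Declared AI, high score", declared_ai=True, detector_score=0.97)))
```

Only the second case conflicts with its declaration. An accurately disclosed AI track passes this check because its declaration and score agree, which is why over-disclosure is the lower-risk posture.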

For the comparison of how the largest AI music generation tools handle disclosure and metadata, see the AI music generator comparison 2026.

The Tennessee ELVIS Act Comparison

The EU AI Act is not the only legal regime independent artists need to think about. The most relevant US comparison is the Tennessee ELVIS Act (Ensuring Likeness, Voice and Image Security Act), signed into law by Governor Bill Lee in March 2024 and effective July 1, 2024. The ELVIS Act expanded Tennessee's existing right-of-publicity statute to explicitly cover voice cloning and AI-generated synthetic vocals.

Three differences between the ELVIS Act and the EU AI Act matter:

Scope. The ELVIS Act focuses narrowly on voice cloning and unauthorized AI use of a person's voice as a property right. The EU AI Act covers the broader category of all AI-generated audio outputs and the transparency obligations attached to them. A fully AI-generated track that does not clone any specific real performer's voice is out of scope under the ELVIS Act but in scope under the EU AI Act.

Enforcement mechanism. The ELVIS Act creates a civil cause of action; the harmed party (the artist whose voice was cloned) sues the operator. The EU AI Act creates an administrative enforcement regime; national supervisory authorities issue fines. Different mechanisms, different forums, different burdens of proof.

First wave of cases. The first civil suits under the ELVIS Act began moving through Tennessee courts in 2025, with reported settlements and ongoing litigation against AI voice-cloning service operators that allowed unauthorized cloning of named country and pop artists. By Q2 2026, the body of case law is small but developing, and the practical effect has been that voice-cloning service operators serving the US market now require explicit consent and licensing documentation for any named voice model.

For independent artists, the combined effect of the EU AI Act and the ELVIS Act is that global compliance is now a two-track problem. Voice cloning is covered everywhere (ELVIS in the US, AI Act in the EU). Fully synthetic AI-generated audio is covered in the EU but not yet covered uniformly in the US. The cleanest operating posture is to assume the stricter regime (the EU AI Act transparency obligation) applies globally and run your catalog accordingly.

Apple Music Transparency Tags as EU Compliance

In May 2026, Apple Music rolled out its Transparency Tag program, which labels tracks in the Apple Music catalog by their declared AI involvement. The tag system was first announced at WWDC 2025 and entered general availability across Apple Music's global catalog in May 2026, roughly three months ahead of the EU AI Act enforcement deadline.

The Apple Music Transparency Tag system is not formally the EU AI Act compliance mechanism, but the alignment is deliberate. Apple's tags map cleanly to the disclosure categories Article 50 of the Regulation will require: human-authored, AI-assisted (human-composed with AI tool use in production), AI-collaborated (human and AI co-authored), and fully AI-generated. The tag is sourced from the artist or label declaration at delivery and validated against Apple's internal detection layer.

The platform context in 2026 is:

Apple Music. Transparency Tag program live globally as of May 2026. Mandatory declaration at delivery. Tags visible to listeners on the now-playing screen.

Spotify. Has not yet announced an equivalent public-facing tag program as of May 12, 2026. Internal detection and policy enforcement on AI content is reported to be in place, but the consumer-facing labeling layer is not yet shipped. The expectation across the industry is that Spotify will follow with a comparable disclosure layer before the EU AI Act enforcement deadline lands.

Deezer. As a French company operating from EU soil, Deezer is the most regulatory-forward DSP in the AI labeling category. Deezer publicly reported in 2025 that roughly 50 percent of daily uploads to its platform contained AI-generated audio, and the platform has operated AI detection and labeling since 2024.

YouTube Music and YouTube. YouTube's parent company Alphabet has deployed SynthID watermarking on AI-generated content from its own Lyria foundation model and announced expansion to third-party uploaded content. As of Q2 2026, YouTube's content disclosure for AI-generated audio is partial and inconsistent across the broader uploaded catalog.

Amazon Music. Has not yet announced a consumer-facing AI labeling program as of May 12, 2026.

Apple's approach (detection plus labeling at the platform layer) and the EU AI Act approach (mandatory disclosure at the source, with watermarking at the GPAI provider layer) are complementary, not duplicative. The artist-side compliance burden is the same in both regimes: declare accurately at upload, ensure the underlying content matches the declaration, and keep documentation of the songwriting and production process available in case of dispute.

For the songwriting-tools side of this question, see AI songwriting co-pilots 2026, which covers which tools sit on which side of the disclosure threshold.

Compliance Playbook for Indie Artists

Below is an 8-step checklist drawn from current artist-counsel advisories and the published guidance from the European Commission's AI Office. It is operational, not legal advice; if your catalog has material AI exposure, retain qualified counsel.

1. Audit your catalog. Walk through every release in your current catalog and classify each track into one of four buckets: human-authored (no AI involvement), AI-assisted (human-composed with AI tool use in production, e.g. AI mastering, AI stem separation), AI-collaborated (human and AI co-authored on substantive parts), or fully AI-generated (AI is the primary creative author of the audio). Document the classification with notes.

2. Document songwriting process. For human-authored and AI-assisted tracks, keep DAW session files, lyric drafts, voice memos of melody development, co-writer notes, and any other artifacts that prove human authorship. In the event of a detection-system mismatch and an appeal, this documentation is the evidence base.

3. Update distributor declarations. For any track in your catalog that is AI-assisted, AI-collaborated, or fully AI-generated, review the metadata at your distributor and update the AI disclosure field if available. DistroKid, CD Baby, TuneCore, Believe, and Amuse all support some form of AI declaration as of mid-2026, with the field becoming mandatory at varying rates across the year.

4. Verify metadata for human-authored tracks. For tracks declared as human-authored, make sure the metadata accurately reflects the writer, publisher, lyrics, and credits. A mismatch in this layer (incomplete co-writer credit, missing publisher, no lyric data) can flag a track for review even when the underlying content is genuinely human-authored.

5. Declare AI usage at upload for assisted tracks. Where your distributor supports AI-assisted disclosure (and most major distributors will by August 2026), use the field. The conservative position is to over-disclose, not under-disclose. Disclosure of AI mastering or AI stem separation does not flag the track as fully AI-generated; it accurately describes the production process.

6. Make distribution decisions for fully AI-generated tracks. For tracks that are fully AI-generated, decide whether distribution to EU markets is worth the disclosure and watermarking compliance burden. Some independent artists are choosing to geo-restrict fully AI-generated experimental work out of EU territories until the enforcement landscape stabilizes; others are disclosing accurately and accepting the labeling.

7. Watch for Apple Music and Spotify tag updates. Apple's Transparency Tag is live as of May 2026. Spotify's equivalent is expected. Set a quarterly reminder to check for new platform-side disclosure requirements and update your declarations accordingly.

8. Subscribe to industry updates. The European Commission's AI Office, the IFPI, Music Business Worldwide, and the Chartlex AI lawsuits tracker are the four sources most useful for tracking enforcement actions, regulatory clarifications, and platform policy changes. The first major enforcement case under the EU AI Act music provisions will reshape operational expectations across the industry, and that case could plausibly become public in Q4 2026 or Q1 2027.
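Steps 1, 3, and 5 of the checklist amount to a small bookkeeping exercise that fits in a spreadsheet or a few lines of code. This is a minimal sketch, assuming a hypothetical in-memory catalog: the enum values mirror the four buckets from step 1, and `Track` and `needs_declaration_update` are illustrative names, not any distributor's API.

```python
from dataclasses import dataclass
from enum import Enum

class AIInvolvement(Enum):
    HUMAN_AUTHORED = "human-authored"
    AI_ASSISTED = "AI-assisted"
    AI_COLLABORATED = "AI-collaborated"
    FULLY_AI_GENERATED = "fully AI-generated"

@dataclass
class Track:
    title: str
    involvement: AIInvolvement
    declared_to_distributor: bool = False  # has the AI disclosure field been filled in?
    notes: str = ""                        # step 1: document the classification

def needs_declaration_update(catalog: list[Track]) -> list[Track]:
    """Step 3: tracks with any AI involvement that are not yet declared at the distributor."""
    return [
        track for track in catalog
        if track.involvement is not AIInvolvement.HUMAN_AUTHORED
        and not track.declared_to_distributor
    ]

# Hypothetical catalog for illustration only.
catalog = [
    Track("Song A", AIInvolvement.HUMAN_AUTHORED, notes="DAW session and voice memos archived"),
    Track("Song B", AIInvolvement.AI_ASSISTED, notes="AI mastering only"),
    Track("Song C", AIInvolvement.FULLY_AI_GENERATED, declared_to_distributor=True),
]

for track in needs_declaration_update(catalog):
    print(f"Update declaration: {track.title} ({track.involvement.value})")
```

In this sample only "Song B" is listed, because it involves AI but has no declaration on file. Human-authored tracks are excluded here, but they still need the documentation and metadata checks from steps 2 and 4.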

For the wider industry landscape context that frames these compliance decisions, see the 2026 state of the indie music industry.

What Comes After August 2026

The August 2, 2026 deadline is the first significant enforcement milestone for music, but it is not the last. Three forward-looking developments are worth watching.

August 2, 2027: high-risk AI obligations. Phase 4 of the EU AI Act brings Annex III high-risk systems under enforceable obligations. The music industry sits mostly outside Annex III, with two narrow exceptions: AI systems used in audience targeting (which intersect with profiling rules under the Regulation) and AI systems used in licensing or rights-management decisions (which may touch on the emotion-recognition and behavioral-prediction categories of Annex III). The practical effect on independent artists is minimal; the practical effect on platforms and rights organizations using AI for catalog matching and royalty allocation could be larger.

First high-profile enforcement case. The realistic timeline for the first publicly reported EU AI Act enforcement action in the music industry is Q4 2026 to Q2 2027, with the most plausible defendants being GPAI providers of AI music generation tools (Suno and Udio are the most-cited candidates given their ongoing US litigation with Sony, Universal, and Warner) rather than individual artists or distributors. The shape of the first enforcement action will reset expectations across the industry. The expectation among industry observers, including the analyst desks at Music Business Worldwide and IFPI's policy team, is that the first major case will land on training-data disclosure under Article 53(1)(d) rather than on watermarking or downstream deployer obligations.

Long-term: training data licensing standardization. The most consequential long-term shift the EU AI Act is likely to drive is the standardization of training-data licensing. The current state (foundation-model operators training on scraped audio with disputed licensing) is structurally incompatible with the training-data summary requirement under Article 53. The most likely equilibrium is a standardized opt-in licensing model similar to the stock-photo industry's relationship with image-generation models (Getty Images licensing to Bria AI is the most-cited reference). For independent artists, the practical implication is that catalog opt-in to AI training licensing may become a discrete revenue line within five years, comparable in significance to streaming or sync.

The artist-side preparation work is the same regardless of how these forward-looking shifts settle: clean metadata, documented authorship, accurate disclosure, and quarterly review of the disclosure landscape.

What This Means for Music Industry Pros

| Stakeholder | What August 2, 2026 means |
|---|---|
| Independent artists | Audit your catalog, classify each track by AI involvement, update distributor declarations. Disclosure at upload is the default expectation. |
| Labels | Catalog-wide AI audit and a formal AI disclosure policy across every distribution agreement. Liability flows up the chain when an artist's declaration is wrong. |
| Distributors | Build or expand the AI disclosure field in upload flows. Document the declaration at the point of upload as the evidence trail for compliance. Apple Music and Deezer alignment is the operational reference. |
| Publishers | Sync clearance frameworks should now require AI disclosure as a standard warranty. Sync supervisors are increasingly demanding it in 2026. |
| Sync agents | Update submission templates to capture AI disclosure status. Supervisors will not clear an AI-generated track without it. |
| Streaming platforms | Detection layer plus labeling layer is the operational pattern. Apple Music is the reference implementation. Spotify is expected to follow. |
| GPAI providers (Suno, Udio, ElevenLabs) | Training data summary publication under Article 53 is the primary compliance burden. Watermarking under Article 50 is the secondary burden. Enforcement risk is concentrated here. |
| PROs and royalty admin | AI-generated content has weaker performance-royalty collection paths in most jurisdictions; clarify your AI policy in writer agreements. |

For the wider industry-state context, see the 2026 state of the indie music industry. For the underlying AI litigation context driving regulation, see the music industry AI lawsuits tracker.

Frequently Asked Questions

When does the EU AI Act begin enforcement for music?

The General Purpose AI (GPAI) provisions of the EU AI Act (Regulation 2024/1689) begin enforcement on August 2, 2026, under Article 113 of the Regulation. The earlier prohibited-practices provisions (Article 5) became enforceable on February 2, 2025, but those provisions do not directly affect the music industry. The August 2, 2026 deadline is the date that matters for music distribution.

Does the EU AI Act apply to non-EU artists?

Yes, if the music is distributed to EU territories. Under Article 2 of the Regulation, the Act applies where the output of an AI system is used in the European Union, regardless of where the provider or deployer is established. An independent artist in the United States releasing AI-generated music to Spotify, Apple Music, or other DSPs that serve EU listeners is within the territorial scope.

What counts as AI-generated audio under the Regulation?

The Regulation does not define AI-generated audio with technical precision; the operating definition drawn from Article 50 and supporting AI Office guidance is audio output produced wholly or substantially by an AI system. The conservative legal reading is that fully AI-generated tracks (Suno, Udio output) are clearly in scope, AI-cloned synthetic vocals are in scope, AI-assisted production (AI mastering, AI stem separation) is partially in scope depending on the substantiality of the AI contribution, and human-performed music produced with AI-assisted tools (drum replacement, pitch correction) is generally out of scope.

Are AI mastering and AI stem separation in scope?

Probably not in the disclosure-at-source sense, because the underlying creative content is human-authored. The conservative compliance position is to disclose use of AI production tools transparently in your distributor metadata anyway, but the regulatory exposure for AI mastering and stem separation is much lower than for AI-generated audio output.

What happens if I declare a track as human-authored but a platform detects it as AI?

The standard process across major DSPs (Apple Music, Deezer publicly, Spotify and others internally) is that a detection-system flag triggers a review. The review can result in relabeling (the platform overrides the artist declaration with its own AI label), takedown, distributor notification, or in the worst case, account termination. Under the EU AI Act, repeated or willful misdeclaration could escalate to regulatory enforcement, with fines under Article 99 up to EUR 15 million or 3 percent of global turnover.

What are the penalties under the EU AI Act?

Under Article 99 of the Regulation, non-compliance with transparency obligations (Article 50, which covers AI-generated audio disclosure) can result in administrative fines of up to EUR 15 million or 3 percent of total worldwide annual turnover for the preceding financial year, whichever is higher. Non-compliance with prohibited-practices provisions (Article 5) can result in fines of up to EUR 35 million or 7 percent. Smaller administrative breaches carry lower caps. National supervisory authorities in each EU member state are empowered to issue fines.

Will distributors require AI disclosure at upload?

Yes, this is already happening at TuneCore and Believe, and is rolling out across DistroKid, CD Baby, Amuse, and Ditto through 2026. The expectation is that AI disclosure will be a mandatory field at upload across all major global distributors by August 2, 2026. According to Chartlex's review of distributor public communications in Q1 2026, the trajectory is uniform across the major distributors.

How does the EU AI Act interact with US law?

The EU AI Act is an administrative regulatory regime; the most relevant US analog is the Tennessee ELVIS Act, which is a civil liability regime focused on voice cloning. Federal US AI legislation has not yet passed as of May 2026. The practical guidance for an artist operating in both markets is to assume the stricter regime (the EU AI Act transparency obligation) applies globally, and to additionally comply with the ELVIS Act for any voice-cloning work involving Tennessee-domiciled performers.

Where to Go From Here

The EU AI Act August 2, 2026 deadline is 82 days away as of publication. The compliance work is mostly preparation, documentation, and metadata cleanup, all of which an independent artist can do without legal counsel for the standard cases.

- Music industry AI lawsuits tracker 2026 covers the Sony, Universal, Warner cases against Suno and Udio, the ELVIS Act case law as it develops, and the litigation backdrop driving the Regulation.

- AI music generator comparison 2026 covers how Suno, Udio, ElevenLabs and other tools handle disclosure, watermarking, and metadata.

- How to protect your music from AI cloning in 2026 covers the artist-side documentation, voice-licensing posture, and detection-appeal preparation.

- AI songwriting co-pilots 2026 covers which songwriting tools sit on which side of the disclosure threshold.

- The 2026 state of the indie music industry covers the wider context of where AI regulation sits inside the indie artist's operating environment.

If you want a clean read on whether your current catalog and metadata are positioned for the August 2026 transition, get your free Chartlex audit and we will surface the gaps before the deadline lands.

About the publisher

About Chartlex

Chartlex is a music promotion company founded in 2018 that has delivered over 100 million verified Spotify streams for independent artists. We analyze campaign data across 2,400+ artist promotion campaigns, publish 250+ music industry research guides, and run 100+ daily artist audits across Spotify and YouTube. Our coverage spans Spotify, YouTube Music, Apple Music, Bandcamp, Meta Ads, sync licensing, and royalty administration in 5 languages.

Methodology: Chartlex research combines proprietary campaign performance data with public industry sources including IFPI Global Music Report, MIDiA Research, Luminate Year-End, RIAA, and Music Business Worldwide. All findings are refreshed quarterly. Last verified: 2026-05-12.
